Enhancing Digital Accessibility Through the Implementation of the Web Speech API and Speech Synthesis Technology

The modern digital landscape is undergoing a significant transformation as standards bodies and browser developers prioritize inclusive design to accommodate a diverse global user base. Among the tools available to developers, the Web Speech API, and specifically its speechSynthesis interface, stands out as a powerful yet frequently underused resource for improving the browsing experience of blind and visually impaired users. By giving browsers a standardized way to convert text into audible speech programmatically, this API bridges the gap between static content and interactive, accessible communication.

The core functionality of the speechSynthesis API allows developers to direct the browser to "utter" specific strings of text using the window.speechSynthesis controller in conjunction with the SpeechSynthesisUtterance object. A basic implementation passes a string to the SpeechSynthesisUtterance constructor and hands the resulting object to the speak() method, which queues it for the browser’s text-to-speech (TTS) engine. The output may retain a mechanical or "robotic" quality depending on the underlying operating system’s voice library, and the set of available voices differs from device to device. Even so, the synthesis interface is supported in current versions of the major browsers, including Google Chrome, Mozilla Firefox, Apple Safari, and Microsoft Edge. This broad compatibility marks a significant milestone in the effort to democratize web accessibility.
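The basic flow described above can be sketched as follows. This is a minimal illustration rather than a reference implementation: the helper name speakText and the UtteranceLike and SynthLike types are hypothetical, introduced only so the example also type-checks and runs outside a browser. In a browser the defaults resolve to the real window.speechSynthesis and SpeechSynthesisUtterance.

```typescript
// Minimal structural types (hypothetical) so the sketch type-checks outside a browser too.
interface UtteranceLike {
  text: string;
}
interface SynthLike {
  speak(utterance: UtteranceLike): void;
}

// Hypothetical helper: hands `text` to the TTS engine and reports whether it did so.
function speakText(
  text: string,
  // Defaults to the browser's controller; undefined where the API is missing.
  synth: SynthLike | undefined = (globalThis as any).speechSynthesis,
  // Defaults to the browser's utterance constructor.
  createUtterance: (t: string) => UtteranceLike = (t) =>
    new (globalThis as any).SpeechSynthesisUtterance(t)
): boolean {
  const trimmed = text.trim();
  // Bail out when there is nothing to say or speech synthesis is unavailable.
  if (!synth || trimmed.length === 0) {
    return false;
  }
  synth.speak(createUtterance(trimmed)); // queues the utterance for playback
  return true;
}
```

In a page, a call such as speakText("Welcome to the site") would be enough. The optional parameters exist mainly so the function can be exercised with a stand-in engine in tests.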

Historical Context and the Evolution of Web Standards

The journey toward a vocalized web began in the early 2010s, when the World Wide Web Consortium (W3C) started exploring speech-based interfaces. The Web Speech API was initially proposed to enable developers to incorporate speech recognition and synthesis into web pages without requiring external plugins or heavy third-party libraries. In 2012, the W3C Speech API Community Group published its initial draft, aiming to provide a roadmap for browser vendors to implement these features.

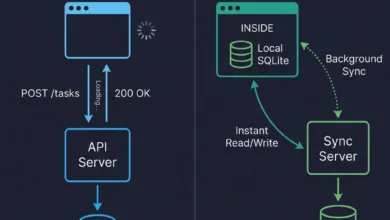

Historically, web accessibility relied almost exclusively on external assistive technologies, such as screen readers (e.g., JAWS, NVDA, or VoiceOver). These tools scan the Document Object Model (DOM) and relay information to the user. The introduction of speechSynthesis gave web applications a way to offer built-in auditory feedback that complements, rather than replaces, these assistive tools. By 2016, the majority of evergreen browsers had implemented stable versions of the synthesis interface, allowing for a more seamless integration of voice-guided navigation and content delivery.

Technical Framework: How Speech Synthesis Operates

At its technical core, the speechSynthesis API operates as a controller for the text-to-speech service of the host device. The process begins with the creation of a SpeechSynthesisUtterance instance, which contains the text that will be spoken. This object is highly configurable, allowing developers to adjust various parameters to improve the clarity and tone of the delivery. Key properties include:

- Pitch (pitch): Adjusts the frequency of the voice, allowing for higher or lower tones. The specification defines a range of 0 to 2, with a default of 1.

- Rate (rate): Controls the speed of the speech, which is crucial for users who may require slower delivery for comprehension or faster delivery for efficiency. The range is 0.1 to 10, with a default of 1.

- Volume (volume): Sets the loudness of the output, from 0 (silent) to 1 (full volume, the default).

- Voice (voice): Allows the selection of specific voice profiles available on the user’s system, ranging from different accents to different languages.
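The numeric properties listed above only accept limited ranges, so it can help to normalize user-chosen values before applying them. The sketch below assumes the ranges given in the Web Speech API specification (pitch 0 to 2, rate 0.1 to 10, volume 0 to 1). The VoiceSettings type and the helper names are hypothetical and are not part of the API.

```typescript
// Hypothetical settings shape mirroring three SpeechSynthesisUtterance properties.
interface VoiceSettings {
  pitch: number;
  rate: number;
  volume: number;
}

// Keep a value inside [min, max].
function clamp(value: number, min: number, max: number): number {
  return Math.min(max, Math.max(min, value));
}

// Fill in defaults and clamp to the ranges assumed from the specification.
function normalizeSettings(requested: Partial<VoiceSettings>): VoiceSettings {
  return {
    pitch: clamp(requested.pitch ?? 1, 0, 2),
    rate: clamp(requested.rate ?? 1, 0.1, 10),
    volume: clamp(requested.volume ?? 1, 0, 1),
  };
}

// Copy the normalized settings onto any utterance-like object and return it.
function applySettings<T extends VoiceSettings>(
  utterance: T,
  requested: Partial<VoiceSettings>
): T {
  const settings = normalizeSettings(requested);
  utterance.pitch = settings.pitch;
  utterance.rate = settings.rate;
  utterance.volume = settings.volume;
  return utterance;
}
```

In a browser, applySettings(new SpeechSynthesisUtterance("Slow and clear"), { rate: 0.8 }) would return an utterance ready to pass to speechSynthesis.speak(). Clamping on the page side keeps a settings panel predictable even if a stored preference falls outside the supported range.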

Furthermore, the window.speechSynthesis.getVoices() method returns a list of the voices available on the device, each with a name and a language tag. This is particularly significant in a globalized web environment, as it allows developers to detect the user’s locale and provide speech in their native language, provided the operating system has the corresponding voice pack installed. The SpeechSynthesisUtterance object also exposes event handlers, such as onstart, onpause, and onend, which enable developers to synchronize on-screen animations or state changes with the auditory output.
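One way to act on this is to match the user’s locale against the language tags reported by getVoices(), and to use onstart and onend to keep interface state in step with playback. The sketch below is illustrative only: pickVoice, trackSpeakingState, and the VoiceLike and LifecycleTarget types are hypothetical names. Normalizing underscores in language tags is a defensive assumption about how some platforms format them.

```typescript
// Hypothetical subset of the fields a browser voice object exposes.
interface VoiceLike {
  name: string;
  lang: string; // language tag such as "en-US"
}

// Hypothetical subset of the utterance event handlers used here.
interface LifecycleTarget {
  onstart: ((event: any) => void) | null;
  onend: ((event: any) => void) | null;
}

// Compare tags case-insensitively and tolerate "en_US"-style separators.
function normalizeTag(tag: string): string {
  return tag.replace("_", "-").toLowerCase();
}

// Prefer an exact locale match, then any voice in the same language.
// Returns undefined when no voice covers the language.
function pickVoice<V extends VoiceLike>(voices: V[], locale: string): V | undefined {
  const wanted = normalizeTag(locale);
  const primary = wanted.split("-")[0];
  const exact = voices.filter((v) => normalizeTag(v.lang) === wanted);
  if (exact.length > 0) {
    return exact[0];
  }
  const sameLanguage = voices.filter(
    (v) => normalizeTag(v.lang).split("-")[0] === primary
  );
  return sameLanguage.length > 0 ? sameLanguage[0] : undefined;
}

// Report speaking state changes, e.g. to toggle a "now reading" indicator.
function trackSpeakingState(
  utterance: LifecycleTarget,
  onChange: (speaking: boolean) => void
): void {
  utterance.onstart = () => onChange(true);
  utterance.onend = () => onChange(false);
}
```

In a browser, the inputs would typically be speechSynthesis.getVoices() and navigator.language, with the result assigned to the utterance’s voice property. Some browsers populate the voice list asynchronously, so an empty result may simply mean the lookup should be retried after the voiceschanged event fires.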

Supporting Data on Accessibility and Global Impact

The drive for wider adoption of the Web Speech API is supported by compelling data regarding global disability and web usage. According to the World Health Organization (WHO), at least 2.2 billion people worldwide have a near or distance vision impairment. In the United States alone, the American Foundation for the Blind reports that millions of adults experience significant vision loss, a demographic that is increasingly reliant on digital services for daily tasks, education, and employment.

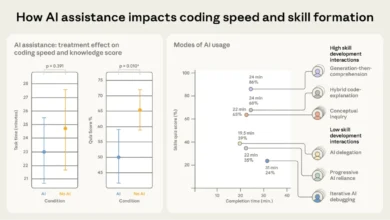

Despite the availability of accessibility tools, a 2023 report by WebAIM (Web Accessibility in Mind) analyzed the top one million homepages and found that 96.3% of them had detectable WCAG 2 (Web Content Accessibility Guidelines) failures. Speech output does not by itself resolve such failures, but the underuse of built-in APIs like speechSynthesis is part of the same gap between what browsers offer and what sites deliver. Some industry analysts suggest that integrating basic auditory cues via the Web Speech API could improve retention among visually impaired users. UX research also indicates that "voice-assisted" browsing can reduce cognitive load for users with learning disabilities, such as dyslexia, by allowing them to hear the text as they follow along visually.

Industry Reactions and Official Perspectives

The tech industry’s response to the Web Speech API has been one of cautious optimism and gradual integration. Major players like Google and Microsoft have championed the API as part of their broader commitment to "Inclusive Design." In official documentation, Google’s Chrome team emphasizes that the API should be used to "augment the user experience," rather than replace the fundamental accessibility layer provided by semantic HTML and ARIA (Accessible Rich Internet Applications) attributes.

Accessibility advocates, including members of the A11y Project, have noted that while speechSynthesis is a powerful tool, it must be implemented with care. A common critique is that "auto-playing" speech can interfere with the user’s own screen reader, creating a cacophony of overlapping voices. The consensus among experts is that speech synthesis should be user-initiated or used for specific notifications that the screen reader might otherwise miss.

"The goal of the Web Speech API is not to reinvent the screen reader," stated a representative from a leading accessibility consultancy during a 2022 developer conference. "Its true value lies in specialized applications—such as language learning apps, interactive storytelling, and complex data visualization—where the browser can provide context-specific auditory feedback that enhances the primary content."

Analysis of Implications and Future Trends

The implications of widespread speechSynthesis adoption extend beyond simple accessibility. As the "Internet of Things" (IoT) continues to expand, the ability for web-based interfaces to communicate audibly becomes essential for devices where reading a conventional screen is impractical, such as smart mirrors or automotive dashboards. The API enables a "Voice First" approach to web development, which aligns with the rising popularity of voice assistants like Alexa and Siri.

However, the rise of this technology also brings security and privacy considerations. Browser vendors have had to implement strict "user activation" requirements to prevent websites from abusing the API. For example, most browsers will not allow a page to trigger speech automatically upon loading; the user must first interact with the page (e.g., a click or a keypress). This prevents malicious actors from using the API for "audio spam" or social engineering attacks.
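In practice, the user activation requirement means speech is best started from an event handler such as a click. The sketch below shows one possible shape for a "read aloud" toggle that starts speech on the first activation and cancels it on the next. The helper name createReadAloudHandler, the ToggleSynth type, and the "#read-aloud" selector in the comment are hypothetical. The speaking flag, speak(), and cancel() mirror members of window.speechSynthesis.

```typescript
// Hypothetical subset of the speechSynthesis controller used by the toggle.
interface ToggleSynth {
  readonly speaking: boolean;
  speak(utterance: { text: string }): void;
  cancel(): void;
}

// Builds a handler meant to run inside a user gesture (click, keypress).
// Each call either starts reading the current text or stops ongoing speech.
function createReadAloudHandler(
  synth: ToggleSynth,
  getText: () => string,
  createUtterance: (text: string) => { text: string }
): () => "started" | "stopped" {
  return () => {
    if (synth.speaking) {
      synth.cancel(); // stops playback and clears any queued utterances
      return "stopped";
    }
    synth.speak(createUtterance(getText()));
    return "started";
  };
}

// Browser wiring (illustrative, not executed here):
// const button = document.querySelector("#read-aloud");
// button?.addEventListener(
//   "click",
//   createReadAloudHandler(
//     window.speechSynthesis,
//     () => document.querySelector("article")?.textContent ?? "",
//     (text) => new SpeechSynthesisUtterance(text)
//   )
// );
```

Keeping speech behind an explicit control also addresses the concern about overlapping with a user’s screen reader, since nothing is spoken until the user asks for it and the same control silences it.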

Looking ahead, the integration of Artificial Intelligence (AI) and Machine Learning (ML) is set to revolutionize the Web Speech API. Currently, most synthesis relies on the device’s built-in voices, which can sound dated. Future iterations of web standards are expected to provide better hooks for cloud-based "neural" voices, which offer human-like inflection and emotional depth. This would transform the speechSynthesis experience from a robotic recitation into a natural conversation, further lowering the barriers to entry for users with disabilities.

Broader Impact on the Digital Ecosystem

The enrichment of the web through the Web Speech API represents a shift in how developers perceive their audience. By moving away from a "visual-only" mindset, the industry is building a more resilient and flexible digital ecosystem. This shift has legal implications as well; as governments worldwide strengthen digital accessibility laws (such as the European Accessibility Act and updates to Section 508 in the U.S.), the use of native browser APIs to support compliance is likely to become a standard practice rather than an optional feature.

Furthermore, the educational sector stands to gain immensely. E-learning platforms can utilize speechSynthesis to provide instant pronunciation guides or to read long-form content to students, catering to various learning styles. In professional environments, the API can be used to read out real-time data alerts or stock market updates, allowing users to multitask without losing focus on their primary screen.

In conclusion, the speechSynthesis interface of the Web Speech API is a vital component of the modern developer’s toolkit. While it is not a panacea for all accessibility challenges, its ability to provide programmable, customizable, and native auditory feedback is an essential step toward a truly universal web. By understanding its technical implementation, historical context, and the data-driven need for its adoption, developers can create more inclusive digital environments that serve all users, regardless of their physical abilities. The ongoing refinement of this API, coupled with emerging AI technologies, promises a future where the web is not just seen, but heard and understood by everyone.