The Web Speech API and the Evolution of Browser-Based Accessibility Standards

The landscape of the modern internet is undergoing a profound transformation, moving away from a purely visual medium toward a multi-modal experience that prioritizes inclusivity and seamless user interaction. Central to this evolution is the Web Speech API, a powerful yet often underutilized set of tools that allows developers to integrate speech recognition and synthesis directly into web applications. Among its most potent features is the speechSynthesis interface, a programmatic gateway that enables browsers to convert text into audible speech. While the technology has existed in various forms for over a decade, its current state of maturity and near-universal browser support marks a critical juncture for web accessibility and user experience design.

The core functionality of the speechSynthesis API is accessed through the window.speechSynthesis controller and the SpeechSynthesisUtterance object. By invoking the speak() method, developers can direct the browser to read any arbitrary string of text. A basic implementation requires only a single JavaScript statement: window.speechSynthesis.speak(new SpeechSynthesisUtterance('Hello world')). Despite this simplicity, the API offers a useful range of controls: the pitch, rate, and volume of each utterance can be adjusted, and a specific voice or language can be selected. This programmatic flexibility provides a unique opportunity to bridge the gap between static content and interactive, audible feedback.
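As a rough illustration of those controls, the sketch below wraps speak() in a small helper. The names speakText and clamp are hypothetical, not part of the API; the numeric ranges (pitch 0 to 2, rate 0.1 to 10, volume 0 to 1) follow the Web Speech API specification. The controller and constructor are passed in as parameters so the helper can also run outside a browser.

```javascript
// Clamp a number into [min, max]; use the fallback when the value is not a finite number.
function clamp(value, min, max, fallback) {
  if (typeof value !== 'number' || !Number.isFinite(value)) return fallback;
  return Math.min(max, Math.max(min, value));
}

// speakText is a hypothetical helper. `synth` and `Utterance` default to the
// browser globals but can be replaced with stand-ins (for example in tests).
function speakText(text, options = {}, synth = globalThis.speechSynthesis,
                   Utterance = globalThis.SpeechSynthesisUtterance) {
  if (!synth || !Utterance) return null; // API not available in this environment

  const utterance = new Utterance(text);
  utterance.pitch = clamp(options.pitch, 0, 2, 1);    // 0 (lowest) to 2 (highest)
  utterance.rate = clamp(options.rate, 0.1, 10, 1);   // 1 is the voice's normal speed
  utterance.volume = clamp(options.volume, 0, 1, 1);  // 0 (silent) to 1 (loudest)
  if (options.lang) utterance.lang = options.lang;    // BCP 47 tag such as 'en-GB'
  if (options.voice) utterance.voice = options.voice; // a SpeechSynthesisVoice object

  synth.speak(utterance); // appends the utterance to the browser's speech queue
  return utterance;
}
```

In a browser, a call such as speakText('Hello world', { rate: 0.9, pitch: 1.2 }) would read the string slightly slower and higher than the default.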

Technical Foundations and Implementation Mechanics

To understand the impact of the speechSynthesis API, one must first examine the technical architecture that supports it. The API operates as an interface between the web application and a text-to-speech (TTS) engine, typically the one supplied by the underlying operating system, although some browsers also expose network-backed voices. When a SpeechSynthesisUtterance is created, it acts as a container for the text and the metadata associated with how that text should be delivered.

The speechSynthesis object exposes a getVoices() method, which returns a list of the voices available to the browser. These voices can vary significantly depending on the operating system: macOS, Windows, Android, and iOS all ship with different native voice synthesizers. Some voices are high-quality, neural-network-based voices that sound remarkably human, while others remain more traditional and "robotic." Because some browsers populate the list asynchronously, the first call may return an empty array until the voiceschanged event fires. Developers can filter these voices by language or name to ensure the output matches the localized context of the user.
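The filtering step might look something like the following sketch. Both pickVoice and loadVoices are hypothetical helper names; the properties they read (lang and default on each voice, getVoices() and the voiceschanged event on the controller) are part of the API. The fallback order (exact tag, then same primary language, then the default voice) is one reasonable choice among several, and the underscore handling is a precaution in case a platform reports a tag like "en_GB".

```javascript
// pickVoice is a hypothetical helper: prefer an exact language-tag match,
// then any voice sharing the primary language, then the default voice.
function pickVoice(voices, lang) {
  const normalize = (tag) => tag.toLowerCase().replace('_', '-');
  const wanted = normalize(lang);
  const primary = wanted.split('-')[0];
  return (
    voices.find((v) => normalize(v.lang) === wanted) ||
    voices.find((v) => normalize(v.lang).split('-')[0] === primary) ||
    voices.find((v) => v.default) ||
    null
  );
}

// loadVoices is a hypothetical helper: resolve immediately when voices are
// already present, otherwise wait for a single `voiceschanged` event.
// Assumption: the event eventually fires; a production version would
// probably add a timeout so the promise cannot stay pending forever.
function loadVoices(synth = globalThis.speechSynthesis) {
  return new Promise((resolve) => {
    if (!synth) return resolve([]); // API not available in this environment
    const voices = synth.getVoices();
    if (voices.length > 0) return resolve(voices);
    synth.addEventListener('voiceschanged', () => resolve(synth.getVoices()), { once: true });
  });
}
```

A page could then call loadVoices().then((voices) => pickVoice(voices, 'fr-FR')) and assign the result to an utterance's voice property when it is not null.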

The API is also event-driven. It provides handlers such as onstart, onpause, onresume, onend, and onboundary, which allow developers to synchronize visual elements on the page with the spoken word. The boundary event in particular reports the character index at which a word or sentence boundary was reached. For instance, an educational platform could highlight specific words or sentences as they are being read aloud, creating a synchronized "read-along" experience that benefits both children learning to read and individuals with cognitive disabilities.
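A read-along feature of that kind could be sketched as follows. The helpers wordAt and speakWithHighlight are hypothetical; the event fields used (name and charIndex on the boundary event) come from the API. One caveat worth stating: not every voice is guaranteed to emit boundary events, so this should be treated as a progressive enhancement rather than something the page depends on.

```javascript
// wordAt is a hypothetical helper: given the full text and the character index
// reported by a boundary event, return the span of the word starting there.
function wordAt(text, charIndex) {
  const rest = text.slice(charIndex);
  const match = rest.match(/^\S+/); // run of non-whitespace characters
  const length = match ? match[0].length : 0;
  return { start: charIndex, end: charIndex + length, word: rest.slice(0, length) };
}

// speakWithHighlight is a hypothetical helper. `onWord` receives a span object
// for each word boundary and `null` once speech has finished.
function speakWithHighlight(text, onWord, synth = globalThis.speechSynthesis,
                            Utterance = globalThis.SpeechSynthesisUtterance) {
  if (!synth || !Utterance) return null; // API not available in this environment

  const utterance = new Utterance(text);
  utterance.onboundary = (event) => {
    // event.name is 'word' or 'sentence'; only word boundaries are used here.
    if (event.name === 'word') onWord(wordAt(text, event.charIndex));
  };
  utterance.onend = () => onWord(null); // clear the highlight when speech ends
  synth.speak(utterance);
  return utterance;
}
```

The onWord callback is where a page would wrap the characters from start to end in a highlighted element, and remove the highlight when it receives null.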

A Chronology of Auditory Integration on the Web

The journey toward standardized browser-based speech is a chronicle of shifting priorities within the World Wide Web Consortium (W3C). In the early 2000s, auditory feedback on the web was largely dependent on proprietary plugins, such as Adobe Flash or specialized Java applets. These solutions were often inaccessible, resource-heavy, and prone to security vulnerabilities.

The formalization of the Web Speech API began in earnest around 2012, led by the W3C’s Speech API Community Group. Members from Google, Microsoft, and Mozilla sought to create a standard that would remove the dependency on external plugins. Google Chrome was among the first to provide an experimental implementation in 2013, primarily focusing on speech recognition for search queries.

By 2014, the speechSynthesis portion of the API began to see broader adoption. Safari followed suit shortly after, recognizing the importance of the tool for its mobile ecosystem. The period between 2016 and 2018 saw the stabilization of the API across Firefox and Edge. Today, the speechSynthesis interface enjoys over 95% global browser support, according to data from "Can I Use," making it a reliable tool for production-level web development.

Supporting Data: The Current State of Web Accessibility

The drive toward better speech APIs is fueled by the growing necessity for digital accessibility compliance. According to the World Health Organization (WHO), at least 2.2 billion people globally have a near or distance vision impairment. For many blind and low-vision people, the internet is navigated through screen readers, specialized software such as NVDA (NonVisual Desktop Access), JAWS (Job Access With Speech), or Apple’s VoiceOver.

Recent data from the WebAIM (Web Accessibility in Mind) Million report, an annual evaluation of the accessibility of the top one million homepages, indicates that while progress is being made, significant barriers remain. In 2023, 96.3% of homepages had detectable WCAG 2 failures. While speechSynthesis is not a replacement for full-scale screen readers, it serves as a critical supplementary tool. It allows developers to provide "audio cues" or "spoken hints" for complex interactive elements that might be confusing when navigated via standard ARIA (Accessible Rich Internet Applications) labels alone.

Furthermore, market research into "Voice Search" and "Voice UI" suggests that by 2025, a significant percentage of web interactions will occur via voice. The integration of speechSynthesis allows web applications to participate in this "Voice First" economy, providing feedback in a format that users are increasingly coming to expect due to the ubiquity of smart speakers and virtual assistants.

Distinguishing APIs from Native Assistive Technologies

A common misconception among developers is that implementing speechSynthesis fulfills all accessibility requirements for blind and low-vision users. Industry experts and accessibility advocates emphasize that this is not the case. Native screen readers are highly optimized tools that allow users to navigate the DOM (Document Object Model), identify headings, interact with forms, and understand the structural hierarchy of a page.

The speechSynthesis API, by contrast, is a developer-controlled tool. It is "opt-in" rather than "always-on." Its primary value lies in enhancing the user experience for specific tasks. For example, a news application might use the API to provide an "audio version" of a long-form article, or a navigation app might use it to read out turn-by-turn directions. It is a feature of the application, whereas a screen reader is a feature of the user’s operating environment.
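For the "audio version" case, one possible approach is to split the article into sentences and queue one utterance per sentence, relying on the fact that speak() appends each utterance to the browser's speech queue. The sketch below is an assumption about how an application might do this, not a prescribed pattern; splitIntoSentences and readArticle are hypothetical names, and the sentence splitter is deliberately naive (it will break on abbreviations such as "Dr." and on decimal numbers).

```javascript
// splitIntoSentences is a hypothetical, deliberately naive splitter: it cuts
// after runs of '.', '!' or '?' and keeps any trailing text without punctuation.
function splitIntoSentences(text) {
  const parts = text.match(/[^.!?]+[.!?]+|[^.!?]+$/g) || [];
  return parts.map((part) => part.trim()).filter((part) => part.length > 0);
}

// readArticle is a hypothetical helper: it queues one utterance per sentence
// and returns how many sentences were queued.
function readArticle(text, synth = globalThis.speechSynthesis,
                     Utterance = globalThis.SpeechSynthesisUtterance) {
  if (!synth || !Utterance) return 0; // API not available in this environment

  synth.cancel(); // drop anything still queued from an earlier read
  const sentences = splitIntoSentences(text);
  for (const sentence of sentences) {
    synth.speak(new Utterance(sentence)); // each call appends to the queue
  }
  return sentences.length;
}
```

Queuing short utterances rather than one long one is a design choice of this sketch: it makes it easier to track progress or stop between sentences, at the cost of small pauses at the joins.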

Statements from the W3C’s Web Accessibility Initiative (WAI) suggest that the best use of the Web Speech API is to augment existing accessibility standards. By using the API to read specific, dynamic updates that might not otherwise be announced, for example changes outside an ARIA live region marked "polite," developers can create a more responsive and informative environment for all users, including those with situational disabilities (such as someone who is driving or cooking and cannot look at a screen).

Implementation Challenges: Privacy and the Autoplay Policy

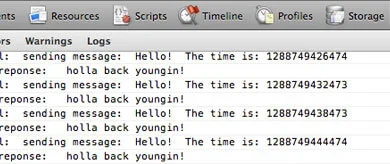

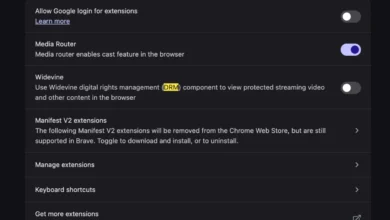

One of the most significant hurdles in the deployment of speechSynthesis has been the industry-wide crackdown on "autoplay" media. In 2018, Google Chrome and other major browsers introduced strict policies to prevent websites from playing audio or video without a prior user gesture (such as a click or a tap).

This change was a response to user complaints regarding intrusive advertisements and "jump-scare" audio. However, it also impacted the speechSynthesis API. A developer cannot simply trigger speech as soon as a page loads; the speak() method will often be blocked or ignored unless it is called within the context of a user interaction.

This, in turn, changed how developers design voice-enabled interfaces. Modern best practices now involve clear "Play" buttons or "Enable Audio" toggles. This ensures that the user is in control of their auditory environment, preventing the jarring experience of a browser suddenly speaking in a quiet or public setting.
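A "Play" button of this kind can be sketched as below. The helper name attachSpeakButton is hypothetical; speak(), cancel(), and the speaking property are part of the API. Because speak() is called inside the click handler, it runs in the context of a user gesture, which is what the autoplay policies described above require. The toggle behavior (a second click stops playback) is an assumption of this sketch.

```javascript
// attachSpeakButton is a hypothetical helper. `button` is any object with
// addEventListener (normally a <button> element); `getText` returns the text
// to read at the moment of the click.
function attachSpeakButton(button, getText, synth = globalThis.speechSynthesis,
                           Utterance = globalThis.SpeechSynthesisUtterance) {
  if (!synth || !Utterance) {
    button.disabled = true; // no speech support: do not offer the control
    return false;
  }
  button.addEventListener('click', () => {
    if (synth.speaking) {
      synth.cancel(); // a second click stops playback and clears the queue
      return;
    }
    synth.speak(new Utterance(getText())); // runs inside the user gesture
  });
  return true;
}
```

In a page this might be wired up as attachSpeakButton(document.querySelector('#listen'), () => document.querySelector('article').textContent), where the #listen id is only an example.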

Broader Impact and Future Implications

The implications of the speechSynthesis API extend far beyond basic text-to-speech. We are currently witnessing a convergence of browser APIs and generative Artificial Intelligence. As Large Language Models (LLMs) become more integrated into web workflows, the need for high-quality, low-latency speech synthesis becomes paramount.

The next generation of the Web Speech API is expected to address current limitations, such as the "robotic" nature of some legacy voices. New proposals are looking at ways to allow browsers to stream high-fidelity neural voices from the cloud or to utilize local hardware acceleration for more natural prosody and intonation.

In the realm of e-learning and global literacy, the impact is already being felt. For non-native speakers, the ability to hear a word pronounced correctly while reading it on a screen is an invaluable learning aid. For the elderly, whose vision may be failing but who are not proficient in complex screen-reading software, simple "click-to-speak" features can restore a sense of digital independence.

Conclusion: The Path Toward an Auditory Web

The speechSynthesis API represents a small but vital piece of the broader push for a more inclusive and interactive internet. While it has remained in the shadows of more high-profile technologies like WebGL or WebAssembly, its utility in creating accessible, user-friendly experiences is undeniable.

As web standards continue to mature, the responsibility lies with developers to utilize these tools thoughtfully. By moving beyond the "robotic" default and integrating speech into the very fabric of web design, the industry can move closer to the W3C’s ultimate goal: a web that is truly for everyone, regardless of how they choose to perceive it. The evolution of the Web Speech API is not just a technical milestone; it is a testament to the ongoing commitment to human-centric digital innovation. Through the strategic use of speechSynthesis, the web is finally finding its voice.