Global Social Media Bans: A Shifting Landscape for Users and Marketers

The digital world, once a boundless frontier, is increasingly becoming a landscape of regulation. As concerns over child safety, mental well-being, and the pervasive influence of online content escalate, governments worldwide are enacting or considering significant restrictions on social media access for minors. This growing wave of legislation presents a complex challenge for social media platforms, marketers, and a generation of digital natives.

The weight of global crises, from political instability to climate change, combined with the curated perfection often presented on social media, has created considerable psychological strain. Consumers, increasingly aware of social media’s "dark sides," are voicing support for measures designed to protect vulnerable populations. A Sprout Social Q1 2026 Pulse Survey found that 68% of consumers back social media bans for children, with parents of young children the most supportive. This public sentiment is a significant driver of legislative action, pushing lawmakers to weigh their responsibility for safeguarding youth online.

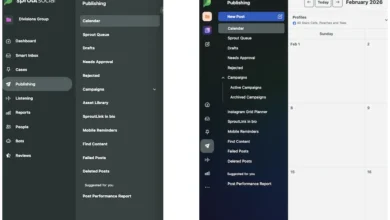

For social media marketers, this evolving regulatory environment translates into heightened stress and increased brand safety risks. The fragmented nature of these bans, varying by country and even by state within nations, complicates strategies for global brands seeking to maintain a consistent presence and reach. The challenge lies not only in understanding and complying with a patchwork of rules but also in adapting content strategies and operational workflows to navigate these new restrictions efficiently.

This article provides an in-depth look at the current and proposed social media bans, exploring their implications for both users and the marketing industry, and offering insights from industry experts on how to adapt in this rapidly changing digital ecosystem.

The Global Surge in Social Media Restrictions for Minors

The trend towards restricting minors’ access to social media is not confined to a single region; it has become a global phenomenon, with diverse approaches and timelines.

Asia-Pacific: A Patchwork of Protections

Australia enacted one of the most stringent laws in late 2024, prohibiting children under 16 from using social media or creating accounts. Platforms such as TikTok, Facebook, Snapchat, Reddit, X (formerly Twitter), and YouTube are affected. The onus is on these networks to verify ages, using methods such as ID checks, behavioral signals, and biometrics, which have raised privacy concerns. Failure to comply can result in fines of up to A$50 million. Within three days of the law taking effect on December 10, 2025, the Australian government reported that 4.7 million accounts belonging to users under 16 had been deactivated or restricted. However, whether these measures truly limit teen access remains a subject of debate.

Indonesia followed suit in late March 2026 with a ban for users under 16 covering platforms including Facebook, Instagram, TikTok, YouTube, Threads, X, Bigo Live, and Roblox. Enforcement will be gradual, but platforms must adhere to government-mandated age verification standards or face substantial fines and potential nationwide bans.

In Malaysia, the Online Safety Act, effective January 1, 2026, raised the minimum age for social media use to 16. Social networks are responsible for implementing these restrictions through electronic know-your-customer (eKYC) checks, which require official ID verification at registration. WhatsApp, Telegram, Facebook, Instagram, TikTok, and YouTube are among the affected platforms.

Other Asia-Pacific nations are also exploring similar measures. New Zealand is considering a ban for users under 16, though specific platforms and enforcement mechanisms are still under deliberation. The Philippines is also contemplating a ban for those under 16, placing the responsibility for enforcement on social media networks.

Europe: A Coordinated, Yet Fragmented Approach

In France, lawmakers approved a bill in early 2026 to ban social media for children under 15, and the Senate adopted the measure in March 2026. The law is expected to take effect in September 2026, coinciding with the start of the school year, although its final form could still be shaped by Senate amendments. France’s media authority will determine which platforms are covered, but the law is expected to mirror Australia’s model, potentially affecting Snapchat, Instagram, TikTok, and X. The European Commission is tasked with enforcing the ban, but how age checks will satisfy EU accuracy and privacy standards is still unclear.

Across the European Union, member states are setting their own age limits, leading to a fragmented regulatory landscape. However, a coalition has emerged to foster a unified approach, and the European Commission is exploring a bloc-wide age verification app. Several European countries, both inside and outside the EU, are already advancing their own measures:

- Austria is considering a ban for users under 14, with specific platform details to be finalized by June 2026.

- Denmark aims to ban social media for children under 15, with enforcement potentially relying on European Commission-backed age verification applications.

- Greece has introduced a ban for users under 15, requiring platforms to verify ages via the "Kids Wallet" app, with fines up to 6% of global revenue for non-compliance.

- Italy is moving forward with a ban for those under 15, utilizing a "mini-national portfolio" integrated with the EU’s digital identity system for age verification.

- Norway is proposing a ban for users under 15, pending public consultation on what constitutes a "social media platform."

- Poland plans to ban social media for users under 15, with enforcement responsibility expected to fall on platforms.

- Portugal has approved restrictions, barring users under 13 from social media and requiring parental consent for those aged 13 to 16, enforced through the national Digital Mobile Key system.

- Slovenia is implementing a ban for users under 15, targeting major platforms with user-generated content.

- The United Kingdom is piloting a six-week program to assess the feasibility of age-based access restrictions, with potential impacts on platforms like Instagram, Snapchat, and TikTok.

The United States: A State-by-State Approach and Legal Challenges

In the United States, the focus has shifted to state-level legislation addressing minors’ social media use. Federal action on TikTok has centered on data security rather than child safety, while several states have implemented their own restrictions on minors:

- Florida prohibits social media accounts for users under 14 and requires parental consent for 14- and 15-year-olds. Platforms must use third-party age verification software and face fines of up to $50,000 per violation.

- Mississippi requires parental consent for users under 18 and mandates the blocking of "harmful" material from minors. Age verification via government-issued ID is required, with penalties of $10,000 per infraction for non-compliant platforms.

- Tennessee mandates parental consent for users under 18, with specific compensation requirements for minors frequently appearing in monetized content. Platforms must verify age, obtain consent, and allow parental supervision.

- Virginia limits social media usage for users under 16 to one hour per day per platform, requiring "commercially reasonable methods" for age determination and access restriction.

However, courts have blocked or struck down legislative efforts in states such as Arkansas, Georgia, Louisiana, and Ohio over vague terms and concerns about constitutional rights, including freedom of speech. Meanwhile, California, Kentucky, Massachusetts, and North Carolina are among the states actively pursuing new legislation, building on recent verdicts against tech giants such as Meta and Google.

Latin America and SWANA: Emerging Regulatory Frameworks

In Latin America, Brazil requires the social media accounts of users under 16 to be linked to a parent or legal guardian, with potential fines of nearly $10 million for platforms that fail to implement effective age verification. Ecuador is considering a ban for users under 15, affecting any social media network that allows public content sharing and messaging.

In the Southwest Asia and North Africa (SWANA) region, Turkey is planning to ban social media for users under 15, requiring age verification and parental controls on platforms like Facebook, Instagram, TikTok, and YouTube. The UAE has implemented rules requiring parental consent for data collection for users under 13 and blocking high-risk content for those under 16, with fines up to $32 million for non-compliance.

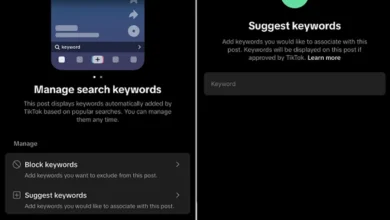

Platforms Respond: Self-Regulation and Evolving Features

In response to mounting legislative pressure and consumer demand, social media platforms are updating their features. The Sprout Social Q1 2026 Pulse Survey found that consumers are more inclined to support parental consent measures and age verification technology than outright bans. In line with this sentiment, many platforms now offer enhanced parental controls, including:

- Family Center features: Providing tools for parents to monitor screen time, manage content, and review privacy settings.

- Parental consent mechanisms: Requiring explicit parental approval for account creation or data usage by minors.

- Age verification technologies: Employing a range of methods, from self-reporting to more advanced biometric and ID-based systems, to ascertain user age.

- AI-driven content filtering: Utilizing artificial intelligence to detect and restrict age-inappropriate content and protect younger users from harmful interactions.

Preparing for the New Era of Social Media: Strategies for Brands

The evolving regulatory landscape and shifting consumer behavior call for a strategic recalibration by brands operating in the digital space. Even brands not directly affected by bans face a changing audience: the Sprout Social survey found that a growing segment of the population, particularly Gen Z, is actively reducing screen time for the sake of mental well-being.

Channel Experimentation and Audience-Centricity

With certain platforms becoming legally precarious for reaching younger audiences, brands must explore alternative channels. Sam Morgan-Smith, Head of Social at The Romans, advises a "future-proofing" approach, prioritizing platforms that guarantee verified reach and adapt to age-gating protocols. This includes exploring platforms like YouTube Kids, Discord communities with robust moderation, and emerging youth-safe ecosystems within the gaming space, such as Fortnite. The key is to align marketing efforts with where the target audience can legally and safely engage.

Tiffany Sayers, co-founder of Loft Social, emphasizes Gen Z’s platform fluidity: this generation prioritizes experiences over specific apps. Brands should evaluate content fit, consumption patterns, and community behavior rather than simply chasing trending platforms. That means favoring community building over broadcasting and treating platforms like TikTok as "discovery engines." Sayers argues that "platform agility is the new brand safety."

Age-Aligned Content and Rigorous Briefing

The era of blanket posting is over. Legislation demands content tailored to specific audiences: it must be "age-aware and legally defensible," which calls for more strategic planning and execution. That means greater rigor in briefing talent, capturing first-party data with consent, and defining success beyond vanity metrics. Considerations include:

- Age-gated content strategies: Developing content tailored to specific age demographics, ensuring compliance with local regulations.

- Platform-specific content adaptations: Recognizing that content suitable for one platform or age group may not be appropriate for another.

- Transparency in creator collaborations: Ensuring clear disclosure of paid partnerships and influencer activities, especially when minors are involved.

Enhanced Brand Safety Protocols and Governance

Brand safety protocols must evolve beyond platform policies to encompass legal regulations and ethical considerations. This requires embedding internal frameworks for robust governance. Key areas include:

- Tighter briefs with age-appropriate messaging: Developing clear guidelines for content creation that explicitly address age restrictions and messaging.

- Stronger vetting processes: Implementing thorough checks for talent, partners, and user-generated content.

- Content archiving and moderation protocols: Establishing systems for managing and reviewing all published content.

- Legal-reviewed guidelines for promotions: Ensuring that giveaways, calls to action, and user engagement tactics comply with relevant laws.

Morgan-Smith stresses that compliance cannot be an afterthought but must be integrated into the workflow. This involves developing comprehensive governance playbooks that outline permissible activities by age, region, and platform, alongside data protocols for consent, age segmentation, and targeting rules. Regular training for all digital-facing departments on global legislation, such as COPPA in the US and the UK Online Safety Act, is crucial to prevent accidental non-compliance.
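The age-segmentation part of such a playbook can be pictured as a lookup from region to age thresholds. The Python below is a hypothetical, simplified sketch: the `AgeRule` type, the `PLAYBOOK` table, the region codes, and the `audience_status` and `can_target` helpers are illustrative names, not part of any platform or vendor tool. The thresholds are taken from the rules described earlier in this article and ignore platform scope, effective dates, and usage limits such as Virginia’s, so a working playbook would still need the legal review described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgeRule:
    """Hypothetical age thresholds for one region (simplified)."""
    min_age: int        # below this age, accounts are barred outright
    consent_until: int  # ages from min_age up to (not including) this need parental consent


# Illustrative entries based on the thresholds reported in this article;
# the region codes and the table itself are assumptions, not an official dataset.
PLAYBOOK: dict[str, AgeRule] = {
    "AU": AgeRule(min_age=16, consent_until=16),     # Australia: under-16 ban
    "FR": AgeRule(min_age=15, consent_until=15),     # France: under-15 ban expected from September 2026
    "US-FL": AgeRule(min_age=14, consent_until=16),  # Florida: ban under 14, consent for 14- and 15-year-olds
    "US-MS": AgeRule(min_age=0, consent_until=18),   # Mississippi: parental consent under 18
}


def audience_status(region: str, age: int) -> str:
    """Classify an age in a region as blocked, consent_required, allowed, or unknown."""
    rule = PLAYBOOK.get(region)
    if rule is None:
        # No playbook entry: flag for review instead of assuming the audience is allowed.
        return "unknown"
    if age < rule.min_age:
        return "blocked"
    if age < rule.consent_until:
        return "consent_required"
    return "allowed"


def can_target(region: str, youngest_age: int) -> bool:
    """True if a campaign aimed at youngest_age and older needs no age gating in this region.

    Restrictions only loosen as age rises, so checking the youngest age is enough.
    """
    return audience_status(region, youngest_age) == "allowed"
```

For example, `can_target("US-FL", 15)` returns `False` because 15-year-olds in Florida need parental consent, while `can_target("AU", 18)` returns `True`. Returning `"unknown"` for unlisted regions keeps gaps in the playbook visible rather than silently treating them as safe.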

Embracing Real-World Immersion

While digital engagement remains vital, brands should also consider the power of real-world activations to complement online efforts, especially as organic social reach becomes harder to secure. IRL experiences, micro-events, brand installations, and peer-to-peer word-of-mouth can foster deeper connections. User-generated content (UGC) and ambassador-led initiatives, seeded through paid media, can continue to perform even as organic reach declines.

The strategic pivot involves embracing hybrid ecosystems that bridge digital influence with real-world immersion. Online, brands can explore gaming integrations, instant messaging, and youth-safe content platforms. In the physical realm, creative resurgence is evident in university activations, experience-led marketing, and retail theater. This approach isn’t about abandoning digital but about recalibrating to environments where attention, access, and trust intersect.

The Future of Social Media: Evolution, Not Erosion

The increasing regulation of social media platforms marks an evolution, not an erosion, of their influence. As Morgan-Smith notes, compliance challenges signal maturity: social media is moving from a "Wild West" to a regulated media environment, much as television did before it. This gives brands an opportunity to reimagine their social media strategies by investing in multi-channel ecosystems, building first-party relationships with younger consumers (with consent), and championing network accountability.

Sayers emphasizes that social media continues to deliver ROI across the entire funnel, albeit with a greater need for smarter systems and more intentional content. The conversation needs to shift from "how many likes" to "how many hearts and minds," demonstrating that ethical and compliant social media marketing is not only possible but powerful.

Ultimately, navigating the evolving landscape of social media bans requires agility, foresight, and a commitment to responsible digital practices. As new legislation takes shape and consumer preferences continue to shift, brands that embrace these changes with strategic adaptability will be best positioned to thrive in the future of online engagement.