Amazon Secures Major Meta Deal for Millions of Custom Graviton Chips as the Battle for AI Infrastructure Dominance Intensifies

Amazon Web Services (AWS) has reached a landmark agreement with Meta Platforms to deploy millions of its homegrown Graviton central processing units (CPUs) to bolster Meta’s expanding artificial intelligence infrastructure. Announced on Friday, the deal represents a significant strategic victory for Amazon as it seeks to prove the efficacy of its custom-designed silicon in an era increasingly dominated by specialized AI hardware. The partnership centers on the latest generation of the AWS Graviton, an ARM-based processor designed to handle the complex, general-purpose computing tasks that form the backbone of modern AI applications.

While the global AI narrative has largely focused on the massive demand for graphics processing units (GPUs) manufactured by Nvidia, the Meta-AWS deal highlights a critical transition in the industry. As AI moves from the resource-heavy "training" phase to the "inference" and "agentic" phases, the requirements for underlying hardware are diversifying. Meta’s decision to integrate millions of Graviton chips into its operations signals a growing reliance on high-performance, energy-efficient CPUs to manage the sophisticated logic, search functions, and multi-step reasoning required by the next generation of AI agents.

The Strategic Shift from Training to Agentic AI

The hardware requirements for artificial intelligence are currently undergoing a foundational shift. During the initial explosion of generative AI, the industry’s primary focus was on training Large Language Models (LLMs). This process requires the massive parallel processing power of GPUs, which can handle the trillions of calculations necessary to teach a model how to predict the next word in a sequence or identify an object in an image. However, once a model is trained, it enters the inference phase, where it responds to user prompts and executes tasks.

The rise of "AI agents" (software entities designed to perform autonomous tasks such as writing code, managing calendars, or conducting real-time research) has introduced new bottlenecks. These agents generate intensive compute workloads that involve real-time reasoning and the coordination of multiple sub-tasks. While GPUs are excellent for the mathematical heavy lifting of neural networks, CPUs like the AWS Graviton are better suited for the "orchestration" of these tasks.
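To make the "orchestration" idea concrete, here is a minimal Python sketch of the CPU-side work an agent performs: splitting a task into sub-tasks, dispatching each to a model, and collecting the answers. Everything in it is an illustrative assumption. `call_model` is a hypothetical stand-in for an accelerator-backed inference call, and none of the names reflect Meta's or AWS's actual software.

```python
import asyncio


async def call_model(prompt: str) -> str:
    """Hypothetical stand-in for a GPU-backed inference call (no real API)."""
    await asyncio.sleep(0.01)  # simulate waiting on the accelerator
    return f"answer({prompt})"


async def run_agent(task: str, subtasks: list[str]) -> dict[str, str]:
    """CPU-side orchestration: fan out sub-tasks concurrently, then collect."""
    # gather() returns results in the same order as the awaitables it was given.
    results = await asyncio.gather(
        *(call_model(f"{task}: {sub}") for sub in subtasks)
    )
    return dict(zip(subtasks, results))


def plan_trip() -> dict[str, str]:
    """Example agent task with three illustrative sub-tasks."""
    subtasks = ["search flights", "check calendar", "draft email"]
    return asyncio.run(run_agent("plan trip", subtasks))
```

The heavy math happens inside the model call; the scheduling, branching, and bookkeeping around it is general-purpose work of the kind the article attributes to CPUs.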

AWS’s latest version of the Graviton was engineered specifically to address these AI-related compute needs. By optimizing the chip for high-speed data processing and lower latency, Amazon has positioned Graviton as an essential component of the AI stack: a complement to high-end GPUs rather than a replacement for them. For Meta, which operates some of the world’s most sophisticated social media algorithms and is aggressively pursuing its "Llama" family of open-source AI models, the efficiency of Graviton provides a scalable way to manage the massive influx of AI-driven interactions across its platforms.

A High-Stakes Rivalry in the Cloud

The timing and scale of the Meta-AWS deal are particularly noteworthy given the volatile nature of cloud provider allegiances. Historically, Meta has been a primary customer of AWS, but it has also diversified its infrastructure spend across Microsoft Azure and Google Cloud. In August of the previous year, Meta made headlines by signing a six-year, $10 billion deal with Google Cloud, a move that was seen as a blow to Amazon’s dominance.

By securing this new commitment for millions of Graviton chips, Amazon has effectively reclaimed a larger portion of Meta’s infrastructure budget. Industry analysts noted that Amazon announced the deal just as the Google Cloud Next conference was concluding. This timing has been interpreted by market observers as a strategic "virtual smirk" aimed at Google, which had just spent several days showcasing its own custom AI silicon, including new versions of its Tensor Processing Units (TPUs) and the Axion ARM-based CPU.

The competition between the "Big Three" cloud providers—AWS, Microsoft, and Google—is no longer just about who has the most data centers. It is increasingly about who can provide the most cost-effective and performant custom silicon. By designing their own chips, cloud providers can bypass the high margins charged by external chipmakers like Nvidia and Intel, offering their customers better "price-performance" ratios.
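A "price-performance" ratio can be read as work delivered per dollar spent. The short Python sketch below shows the arithmetic under stated assumptions: the throughput and hourly prices are hypothetical round numbers chosen for illustration, not AWS pricing or benchmark results.

```python
def price_performance(requests_per_second: float, dollars_per_hour: float) -> float:
    """Requests served per dollar of instance time."""
    if dollars_per_hour <= 0:
        raise ValueError("dollars_per_hour must be positive")
    return requests_per_second * 3600 / dollars_per_hour


def relative_gain(candidate: float, baseline: float) -> float:
    """Fractional improvement of candidate over baseline (0.25 means 25%)."""
    return candidate / baseline - 1


# Hypothetical figures: same throughput, candidate instance priced 20% lower.
baseline = price_performance(requests_per_second=1000, dollars_per_hour=1.00)
candidate = price_performance(requests_per_second=1000, dollars_per_hour=0.80)
gain = relative_gain(candidate, baseline)
```

With these assumed inputs, a 20% lower hourly price at equal throughput works out to roughly a 25% gain in price-performance, because the ratio scales with the inverse of price (1 / 0.8 = 1.25).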

The Evolution of Amazon’s Custom Silicon

Amazon’s journey into custom silicon began in earnest with the acquisition of Annapurna Labs in 2015. Since then, the company has developed three distinct lines of chips: Graviton (CPUs), Trainium (AI training chips), and Inferentia (AI inference chips).

- Graviton (CPU): Now in its fifth generation, the Graviton is an ARM-based processor. Unlike the traditional x86 architecture used by Intel and AMD, the ARM architecture is known for its high energy efficiency, measured as performance-per-watt. For a company like Meta, which operates at a scale where electricity costs and thermal management are multibillion-dollar concerns, the efficiency of Graviton is a primary selling point.

- Trainium (AI Training): Despite its name, Trainium is used for both training and inference. It is Amazon’s direct answer to Nvidia’s H100 and H200 GPUs.

- Inferentia (AI Inference): Specifically designed to run models after they have been trained, focusing on low latency and high throughput.

The Meta deal specifically focuses on Graviton, allowing Amazon to showcase its CPU prowess. This is a critical proving point, as the Graviton competes directly with Nvidia’s new "Vera" CPU. While Nvidia is the undisputed king of GPUs, its move into the CPU market with ARM-based designs shows that even the market leader recognizes the importance of the CPU in the AI ecosystem. However, a key distinction remains: Nvidia sells its chips to any enterprise or cloud provider willing to pay, whereas AWS chips are proprietary, available only to customers who rent capacity on the AWS cloud.

The Anthropic Factor and Resource Allocation

The Meta deal also clarifies Amazon’s broader strategy following its massive investment in Anthropic, the creator of the Claude AI model. Earlier this month, Anthropic committed to spending $100 billion over the next decade to run its workloads on AWS. A significant portion of that spending is dedicated to Trainium, Amazon’s specialized AI chip.

With Anthropic "commandeering" a large share of Trainium’s future capacity, Amazon needed to demonstrate that its other silicon offerings were equally viable for major tech players. The Meta deal serves this purpose. While Anthropic focuses on the raw power needed to build and train "frontier" models, Meta is utilizing Graviton to power the massive, distributed logic required to serve AI features to billions of users. This allows Amazon to diversify its success across its entire hardware portfolio rather than being perceived as a one-trick pony reliant on a single partnership.

Financial and Operational Implications

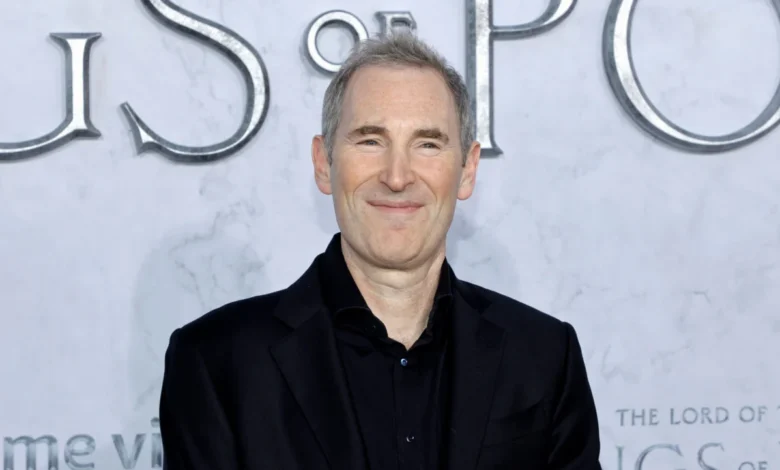

Amazon CEO Andy Jassy has been vocal about his intentions to disrupt the silicon market. In his most recent annual shareholder letter, Jassy took direct aim at incumbents like Nvidia and Intel, stating that enterprises are increasingly desperate for better price-performance ratios. The pressure on Amazon’s internal chip-building team is immense; they must prove that AWS-designed silicon can outperform or at least match the cost-efficiency of industry standards.

For Meta, the deal is a pragmatic move to control operational expenses. As Meta transitions toward "agentic" AI, where AI is not just a chatbot but a tool that performs actions, the sheer volume of compute required is expected to grow exponentially. By locking in a deal for millions of chips, Meta ensures it has the specialized capacity needed to stay ahead of competitors like TikTok and its parent company ByteDance, which is also investing heavily in AI-driven content recommendation and generation.

Chronology of the AWS Silicon Strategy

To understand the significance of the Meta deal, one must look at the timeline of Amazon’s hardware development:

- 2015: Amazon acquires Annapurna Labs, signaling its intent to build custom hardware.

- 2018: AWS launches the first-generation Graviton chip, the first major ARM-based CPU for the cloud.

- 2020: Graviton2 is released, offering a 40% improvement in price-performance over comparable x86 instances.

- 2021: AWS introduces Trainium, targeting the AI training market dominated by Nvidia.

- 2023: Graviton4 and Trainium2 are announced, focusing on memory bandwidth and energy efficiency for LLMs.

- Early 2024: Amazon CEO Andy Jassy emphasizes custom silicon as a cornerstone of AWS’s future growth in his shareholder letter.

- April 2024: Anthropic signs a $100 billion, 10-year deal with AWS, focusing on Trainium.

- Present: Meta signs the deal for millions of Graviton chips, solidifying AWS’s position as a leader in both AI training and general-purpose AI compute.

Broader Impact on the Semiconductor Industry

The Meta-AWS agreement is a clear indicator that the "silicon wars" have entered a new phase. We are moving away from a world where a few chipmakers provide general-purpose hardware to everyone, and toward a world of "vertical integration," where the largest software and cloud companies design the very chips their software runs on.

This trend poses a long-term challenge to traditional semiconductor giants. If the largest buyers of chips (Meta, Google, Microsoft, Amazon) all become chip designers themselves, the addressable market for external vendors could shrink, or at the very least, become significantly more competitive. Furthermore, the focus on ARM architecture for data centers is a validation of the technology’s ability to handle high-performance workloads, further eroding the historical dominance of the x86 architecture.

Conclusion: A New Era of AI Infrastructure

The deal between Amazon and Meta is more than just a supply contract; it is a blueprint for the future of AI infrastructure. By betting on Graviton, Meta is acknowledging that the future of AI isn’t just about massive GPUs, but about the intelligent, efficient orchestration of tasks that only a modern, optimized CPU can provide.

For Amazon, the deal provides the ultimate validation of its long-term investment in Annapurna Labs and custom silicon. As AI agents become more prevalent in everyday life—handling everything from customer service to complex software engineering—the hardware they run on will become the most valuable real estate in the world. With Meta now firmly integrated into the Graviton ecosystem, Amazon has secured a front-row seat in the next chapter of the digital revolution.