Unlocking Latent Value: WordPress Aggregator Sites Emerge as Critical Data Pipelines for AI Agent Ecosystems

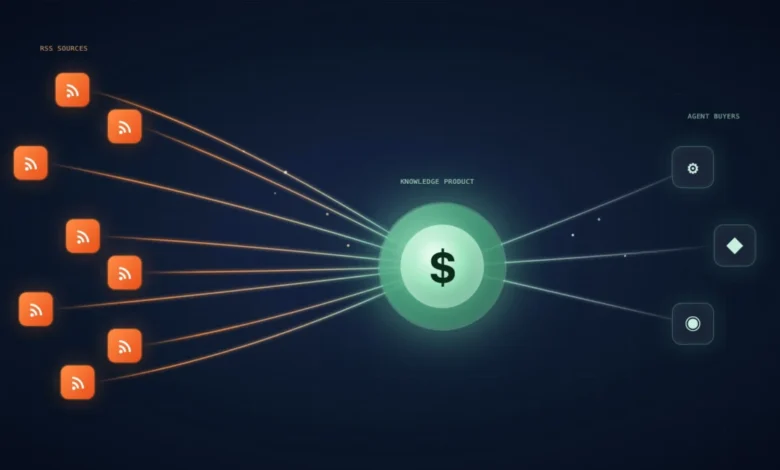

A significant, yet largely unacknowledged, asset resides on the servers of many WordPress aggregator site owners, poised to generate a novel revenue stream. Over the past year, a distinct and rapidly growing audience has quietly integrated itself into the digital ecosystem: AI agent builders. These innovators, engaged in constructing sophisticated automated research assistants using platforms like n8n workflows, Make scenarios, Claude instances, custom MCP servers, and bespoke Python scripts, are actively seeking highly specific, niche-focused information feeds that can be seamlessly integrated into their AI setups. The fundamental challenge they face stems from the inherent obsolescence of large language models (LLMs) at the moment of their deployment; off-the-shelf solutions consistently fail to provide the real-time, current data essential for tasks dependent on recent events.

For nearly a decade, WordPress aggregator sites have meticulously gathered and presented precisely this kind of current information to human readers. Unbeknownst to many of their operators, these same sites now cater to a secondary, technologically advanced audience they were never explicitly designed for. The industry at large within the WordPress community has largely overlooked this emerging opportunity, creating a significant first-mover advantage for early adopters before larger entities recognize and capitalize on the trend. This represents a pivotal moment for existing aggregator site owners to reposition their digital assets as invaluable knowledge sources for the burgeoning AI economy.

The Inherent Value of WordPress Aggregators as AI Knowledge Hubs

At its core, a WordPress aggregator site, especially one built using robust plugins like WP RSS Aggregator, functions as an advanced data processing engine. A closer examination of its operational mechanics reveals a system perfectly suited to the demands of AI agents. Such a system is designed to fetch content from multiple external sources on a predefined schedule, intelligently parse incoming data, and gracefully handle errors such as broken feeds. This foundational infrastructure is not merely a display mechanism for human consumption; it is a meticulously engineered data pipeline.

Key features of these platforms further enhance their utility for AI. Overlapping entries from various sources are automatically deduplicated, preventing redundant information and conserving processing resources. Configurable keyword filters efficiently strip out irrelevant content, ensuring a high signal-to-noise ratio. Moreover, incoming content is systematically categorized and tagged, enabling the creation of topically organized archives. Crucially, each section of these archives publishes its own clean, structured RSS output, making it readily consumable by external systems. Over time, this sophisticated aggregation process culminates in a searchable, highly structured, and continuously updated library of niche-specific information. This architecture aligns almost perfectly with the requirements of an AI agent seeking a reliable and current knowledge source. The fundamental content published by these sites requires no significant alteration; the only substantive difference lies in the nature of the entity consuming the feed.

The Critical Need for Fresh, Curated Data in AI Agents

The necessity for fresh, curated RSS feeds by AI agents is rooted in a fundamental limitation of all large language models: the "knowledge cutoff." Every LLM, regardless of its sophistication, is trained on a dataset that is inherently static and becomes outdated shortly after its release. Depending on the vendor and model version, this training data can be anywhere from three months to two years old. While this historical data suffices for general reasoning tasks and answering queries about established facts, its utility rapidly diminishes when confronted with questions about recent developments.

Consider the practical implications of an AI agent operating with an outdated knowledge base. An SEO agent, for instance, might be tasked with providing strategic advice but remains unaware of the latest Google core algorithm update, leading to recommendations that are at best ineffective and at worst detrimental. Similarly, a competitor-research agent could confidently present information about a rival product that was discontinued six months prior, undermining the client’s trust and leading to flawed business decisions. In such scenarios, the agent’s reasoning, while logically sound based on its internal data, is built upon an unreliable and obsolete foundation, rendering its outputs inaccurate and untrustworthy in real-world applications.

AI builders are acutely aware of these limitations and have explored various methods to overcome them, each with its own set of drawbacks. One common approach involves bolting live web search capabilities onto the agent. While providing real-time access, this method is often slow and resource-intensive at scale, incurring significant operational costs. Furthermore, search results are inherently influenced by search engine ranking algorithms, which may prioritize popular content over genuinely useful, niche-specific information. Another strategy involves developing custom web scrapers. These bespoke solutions are fragile, prone to breaking whenever a source website undergoes a redesign or implements new bot-detection mechanisms, requiring constant maintenance. A less efficient but sometimes employed tactic involves subscribing agents directly to dozens of raw, unfiltered RSS feeds. This often results in a deluge of duplicate content and low-signal noise, consuming valuable processing tokens and diminishing the agent’s efficiency.

A fourth, largely untapped, and highly effective option exists: leveraging a curated RSS hub built on WordPress. This approach transforms an existing aggregator site into a sophisticated data delivery system, offering clean, categorized feeds that AI builders can directly integrate into their agents. This method circumvents the issues of obsolescence, cost, fragility, and noise inherent in other solutions, providing a streamlined and reliable source of current information.

Monetization Strategies for WordPress Aggregators in the AI Era

For operators who have traditionally managed aggregator sites for SEO traffic and display ad revenue, this emerging AI agent audience represents a powerful additive revenue stream. Existing SEO strategies and advertising models can remain entirely unchanged. The core difference is that the meticulously curated RSS feeds, initially published for human consumption, now simultaneously serve as a valuable product for a distinct and high-value secondary audience. Several distinct pathways exist for monetizing this capability:

Selling Subscription Access to Curated Feeds

The most direct monetization strategy involves offering subscription-based access to highly curated feeds. Technically, this can be achieved with relative ease. A private URL, secured by a unique token embedded in the query string and enforced through a server-side rule (e.g., via Cloudflare or a small PHP script), can be set up on an existing WordPress installation within minutes. Each subscriber receives a unique token, which effectively becomes the product being sold.

Consider a hypothetical scenario: a WordPress aggregator specializing in a focused "200-source crypto hub" charges $49 per month for access. If fifty AI agent builders subscribe, this single niche hub could generate approximately $2,450 in monthly recurring revenue. This revenue is largely incremental, as it leverages existing infrastructure and content curation efforts. Scaled across a portfolio of several such niche-specific hubs, this model can rapidly evolve into a substantial and consistent revenue line, significantly enhancing the overall profitability of the aggregator business.

Building and Selling Websites as Knowledge Bases

The landscape of buyers interested in aggregator sites is undergoing a transformation. Historically, these sites were primarily sought by SEO arbitrageurs looking to leverage traffic for ad revenue. However, with the rise of AI agents, a new class of buyer has emerged: organizations and individuals seeking ready-to-deploy knowledge assets. These buyers are less interested in conventional SEO metrics and more focused on the quality, currency, and structure of the data contained within the site. An aggregator site that has been meticulously curated and maintained becomes a highly attractive acquisition target, valued for its established data pipeline and organized information architecture, which can be immediately integrated into larger AI-driven projects.

Running Proprietary Data Pipelines

An aggregator site owner possesses a unique advantage: direct ownership of the data pipeline. This internal control significantly shortens the path to developing proprietary AI products. By owning the source of curated, real-time data, the operator can build their own AI agents or applications on top of this foundation, without the need to license external data feeds or contend with the complexities of third-party integrations. Furthermore, the operator’s inherent understanding of the niche covered by their aggregator site provides invaluable insight into the specific questions and challenges an AI agent should be designed to address, facilitating the development of highly effective and relevant AI solutions.

Creating White-Labelled Hubs for Agencies

Another lucrative avenue involves offering white-labelled, custom-curated data hubs to agencies. Many digital agencies and consulting firms are increasingly integrating AI tools into their service offerings, requiring specialized and proprietary data feeds. These agencies are often willing to pay a premium for custom-tailored feeds that seamlessly plug into their internal AI systems, providing a significant competitive edge. The margins on such white-label services are typically much healthier than those derived from traditional display advertising. Moreover, this model creates a more robust and defensible business moat. The unique editorial judgment and meticulous curation embedded within the data pipeline are difficult for competitors to replicate quickly, establishing a sustainable advantage rooted in human expertise and discernment. Beyond these direct monetization paths, simply establishing an aggregator site as the definitive reference source within a particular niche offers significant long-term strategic value and first-mover advantage, as many categories remain wide open for leadership.

Step-by-Step Guide: Building a Curated SEO Feed for AI Agents

To illustrate the practical application of this concept, let’s outline the process of packaging an SEO knowledge hub and selling access to AI agent builders. SEO is an ideal niche for this initial venture due to its established ecosystem of tools, significant budget allocation, and an audience already highly accustomed to working with AI technologies. WordPress plugins in the SEO space are, in fact, already being reshaped by AI integration.

Step 1: Strategic Source Selection for Your Niche

The initial step involves meticulously selecting the right sources, a process familiar to any experienced aggregator operator. For an SEO hub, a robust shortlist would naturally include:

- Daily News: Search Engine Land and Search Engine Roundtable for timely industry news and updates.

- Official Announcements: The Google Search Central blog and the Google Search Status Dashboard for direct communications from Google.

- Expert Commentary: Feeds from prominent figures like Aleyda Solis, Lily Ray, and Glen Allsopp (Detailed) for in-depth analysis and insights.

- Vendor Research: Content from leading SEO tool providers such as Moz, Ahrefs, and SEMrush for data-driven research and product updates.

- Community Signal: The r/SEO subreddit via its RSS endpoint to capture community discussions and emerging trends.

- Multimedia: Selected YouTube channels (via their channel feeds) and podcast feeds with comprehensive show notes to incorporate audio-visual content.

Crucially, the focus here shifts from sheer volume to high-quality signal. Content primarily consisting of affiliate roundups, generic product launches, or "we just launched" press releases should be excluded. The same discerning instincts used to build a high-quality niche site apply, but with an even stronger emphasis on actionable intelligence over marketing noise.

Step 2: Aggregating Feeds within WordPress

Once sources are identified, each RSS feed is added to your chosen WordPress aggregator plugin. Optimal polling intervals should be set between thirty and sixty minutes to ensure timely updates without overloading the server or source feeds. Where licensing terms permit, enabling full-text import is highly recommended, as it provides agents with complete articles for deeper analysis rather than just summaries. For an experienced operator, this stage can be completed efficiently, typically within a few minutes to a couple of hours, depending on the number of sources.

Step 3: Aggressive Content Curation for AI Consumption

This stage is where the operator’s expertise truly translates into a premium product. Human readers possess an innate ability to skim and filter irrelevant information, tolerating a certain degree of "noise" in their feeds. AI agents, however, lack this nuanced discernment. They process every input with equal weight, consuming valuable processing tokens on promotional material or extraneous content. Therefore, for an AI-centric product, curation transcends being merely a "nice-to-have" feature; it becomes the defining characteristic and the primary value proposition.

Implement rigorous keyword filters to automatically discard sponsored posts, deal announcements, generic product launches, and other low-value content. Establish a disciplined category routing system, ensuring that distinct topics such as algorithm updates, technical SEO, AI search, and case studies are directed into their own clean, dedicated buckets rather than coalescing into a generic news dump. For top-tier sources, a manual approval queue can be implemented (a feature often found in advanced aggregator plugins), allowing for human editors to review and approve content before it is published to the premium feed, guaranteeing its pristine quality. The investment in a part-time editor, perhaps thirty minutes a day, transforms the raw aggregated data into a high-value, paid product. This human-driven curation, delivered at machine speed, is a capability that customers cannot easily replicate themselves, making it the core value they are paying for when subscribing.

Step 4: Exposing RSS Feeds and Gating Premium Access

WordPress inherently provides the plumbing for RSS feeds. By default, categories, tags, and even custom post types can have their own RSS feeds (e.g., yourdomain.com/category/seo/feed/). To monetize access, a unique token is dropped into the query string of these feed URLs. This token is then enforced by either a Cloudflare rule or a small piece of PHP code, which verifies the token’s validity before serving the feed. Each subscriber receives a unique, non-transferable token, making the token itself the salable product. This ensures secure, controlled access to the premium, curated content.

Step 5: Seamless Hand-off to the AI Agent Builder

Once the curated, token-gated feed is established, the aggregator operator’s primary task is complete. Regardless of the customer’s chosen AI framework—be it n8n, a Python script running on a cron job, a Cloudflare Worker, an MCP server, LangChain, or a more exotic setup—the integration pattern remains consistent. The agent simply points its RSS reader at one of your category feeds, pulls new items on a daily basis, summarizes them (if necessary), and stores the distilled output in its internal memory or knowledge base. The aggregator operator is not required to engage with the complexities of the agent’s internal workings; their sole responsibility is to publish a clean, reliable, and trustworthy feed for machine consumption.

Common Mistakes to Avoid When Selling RSS Feeds to AI Builders

Several common pitfalls can derail the success of this monetization strategy in its initial stages:

- Selling the Firehose Feed: Offering the raw, unfiltered "firehose" feed instead of carefully curated subsets is a significant error. AI agent builders are highly sensitive to token costs (the computational expense of processing data). An unfiltered feed, laden with irrelevant content, will quickly lead to complaints about excessive token consumption. Always prioritize and sell focused, highly curated feeds, reserving the firehose for internal use.

- Insufficient Curation: What passes as "good enough" for a human audience is rarely sufficient for an AI agent. The entire premise of this product is the quality and precision of the editorial layer. Skipping the aggressive curation pass undermines the value proposition and will lead to an unreliable product.

- Full Articles vs. Summaries: While full-text import is beneficial for deep analysis, not all agents require it for every item. Providing only full articles can again lead to higher token costs for the builder. Offer both full and summarized versions of the feed, clearly flagging the summary feed as the recommended option for most use cases, allowing builders to choose based on their specific needs and token budgets.

- Forgetting Deduplication: Most aggregator plugins include deduplication features out-of-the-box, but it is crucial to verify that this functionality is active. Redundant items from multiple sources waste token budget and degrade the quality of the agent’s input.

- Pricing Per Request: AI agent builders generally dislike variable costs associated with their input data. A flat monthly subscription rate is almost always preferred over a per-request or per-token pricing model, as it provides predictability and simplifies budget management for the customer.

Validating the RSS Feed Product Idea This Week

For existing aggregator site operators, validating this product idea can be a low-effort, high-reward experiment. Select your most meticulously curated category feed, generate a token-protected version of its URL, and share it with a concise pitch in relevant online communities. Suitable platforms include n8n Discord servers, dedicated AI agent subreddits like r/AI_Agents, or forums frequented by developers building automation workflows. The pitch should highlight the feed’s niche focus and its utility as a source of daily, curated information for AI agents.

If even a handful of individuals express interest in a paid tier, it serves as immediate validation of a viable new revenue stream, leveraging infrastructure already in place. If there is no uptake, the operator has gained valuable insights into which niches currently resonate with the AI agent builder audience, information that can be applied to subsequent hub development.

The perception of "WordPress aggregator site" has, for several years, been relegated to a fading SEO play. However, the rapidly expanding AI agent landscape is revitalizing this category for an entirely different reason. The inherent value now lies in its capacity as a robust data pipeline for a new generation of buyers, rather than solely as a traffic generation engine. The economic dynamics of this shift are fundamentally different from the traditional model, and critically, the market remains largely unserved. The untapped potential for aggregator site owners to reposition their assets as indispensable machine-readable products for AI agents is immense. It is imperative for existing operators to explore and define the value of their niche within this evolving ecosystem before external entities capture this significant opportunity.

Disclosure: The author has an ownership interest in WP RSS Aggregator, a plugin relevant to the approach discussed in this article.