Designing for Agentic AI: The Decision Node Audit and the Path to Transparent Intelligence

As artificial intelligence transitions from generative models that simply provide information to agentic systems capable of executing complex tasks autonomously, a significant psychological and technical gap has emerged between user expectations and system performance. This evolution, often referred to as the "agentic shift," allows AI to handle workflows such as processing insurance claims, managing legal contracts, and executing financial trades. However, the autonomy granted to these systems often results in a lack of transparency, leading to what industry experts describe as the "Black Box" or "Data Dump" dilemma. To solve this, a new methodology known as the Decision Node Audit is being implemented by leading design teams to map backend logic to user interfaces, ensuring that AI transparency is treated not as a stylistic choice but as a functional requirement for building trust.

The Problem of Opaque Autonomy in Modern AI

The current landscape of agentic AI is defined by its ability to operate independently over extended periods. Unlike traditional chatbots that provide immediate responses, an agentic AI might spend thirty seconds or several minutes navigating databases, verifying compliance, and calculating probabilities. During this "invisible" phase, users often experience a sense of powerlessness. If a system provides no feedback, users fear it has stalled or "hallucinated." Conversely, if a system provides too much technical data—streaming every API call and log line—users suffer from notification blindness, losing the very efficiency the AI was intended to provide.

Market research indicates that trust is the primary barrier to the enterprise adoption of AI agents. According to recent industry surveys, nearly 70% of business leaders cite "lack of transparency in AI decision-making" as a top concern when deploying autonomous tools. The challenge for designers and engineers is to find the "Goldilocks zone" of transparency: providing enough information to ease user anxiety without overwhelming users with noise.

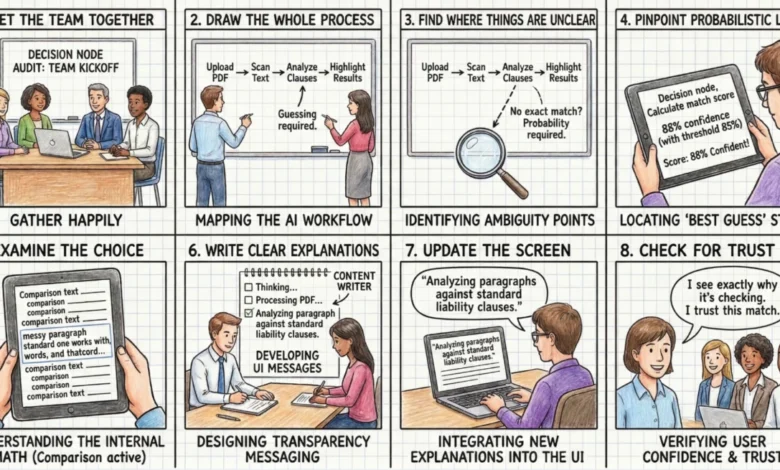

The Decision Node Audit: A New Design Paradigm

The Decision Node Audit is a structured process designed to align an AI’s internal logic with the user’s mental model. This methodology requires cross-functional collaboration between software engineers, product managers, and UX designers to identify every point in a workflow where the AI moves beyond set rules and enters the realm of probability and estimation.

In standard deterministic programming, the logic follows a strict "if-then" structure. Agentic AI, however, regularly reaches "ambiguity points" where no fixed rule settles the outcome. For instance, an AI reviewing a legal document might find a liability clause that matches a standard template with only 65% certainty. At this moment, the AI must make a choice: flag it for human review, ignore the discrepancy, or attempt to resolve it using contextual clues. The Decision Node Audit identifies these moments of high-stakes uncertainty and marks them as "Transparency Moments": specific points where the UI must update the user on what the system is doing and why.
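
As a rough illustration of how an ambiguity point might be routed, the sketch below maps a match confidence to one of the three choices described above and records whether the step should be surfaced as a Transparency Moment. The threshold values, the `route_decision_node` function, and the `Action` names are all assumptions made for this example; the article does not say which choice a 65% match should produce.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ACCEPT = "accept"                              # treat as a match, ignore the discrepancy
    RESOLVE_WITH_CONTEXT = "resolve_with_context"  # try contextual clues first
    FLAG_FOR_REVIEW = "flag_for_review"            # hand the decision to a human


@dataclass(frozen=True)
class NodeDecision:
    action: Action
    transparency_moment: bool  # should the UI tell the user about this step?


# Hypothetical thresholds; a real audit would set these per workflow.
ACCEPT_AT = 0.90
REVIEW_BELOW = 0.50


def route_decision_node(confidence: float) -> NodeDecision:
    """Pick an action for one probabilistic step, given a 0-1 confidence."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= ACCEPT_AT:
        return NodeDecision(Action.ACCEPT, transparency_moment=False)
    if confidence < REVIEW_BELOW:
        return NodeDecision(Action.FLAG_FOR_REVIEW, transparency_moment=True)
    return NodeDecision(Action.RESOLVE_WITH_CONTEXT, transparency_moment=True)


decision = route_decision_node(0.65)
print(decision.action.value, decision.transparency_moment)
```

Under these assumed thresholds, the 65% liability clause falls in the middle band, so the agent attempts a contextual resolution and the UI is expected to say so.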

Chronology of a Workflow: The Meridian Insurance Case Study

To illustrate the impact of this audit, consider the case of Meridian (a pseudonym for a major insurance firm), which recently overhauled its AI-driven accident claim processing system. The original interface followed a "Black Box" model, where users uploaded documents and saw a generic "Calculating Claim Status" bar for over a minute. User feedback was overwhelmingly negative, characterized by anxiety over whether the AI was properly considering the nuances of police reports.

The team initiated a Decision Node Audit, which revealed three critical probability-based steps in the backend logic:

- Damage Assessment: The AI compared uploaded photos against a database of vehicle types.

- Document Verification: The AI cross-referenced the police report with policy terms.

- Risk Calculation: The AI assigned a payout range based on historical data.

Following the audit, the team transformed these backend steps into visible transparency moments. Instead of a static progress bar, the UI began to communicate the system’s intent: "Assessing vehicle damage from uploaded photos," followed by "Reviewing police report for mitigating circumstances," and finally, "Verifying policy coverage limits." While the processing time remained the same, user confidence scores rose by over 40%. Users reported feeling that the AI was "thinking" and performing valuable work, rather than simply being stuck.
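
One way to picture the change is a pipeline that announces each backend step just before it runs. The sketch below is not Meridian's implementation: the step keys, the `run_claim` generator, and the fallback message are hypothetical, and pairing each quoted status with a specific backend step is an assumption, since the case study lists them only in order.

```python
from typing import Callable, Iterator

# Status copy quoted from the case study, keyed by hypothetical step names.
STATUS_MESSAGES = {
    "damage_assessment": "Assessing vehicle damage from uploaded photos",
    "document_verification": "Reviewing police report for mitigating circumstances",
    "risk_calculation": "Verifying policy coverage limits",
}


def run_claim(steps: dict[str, Callable[[], None]]) -> Iterator[str]:
    """Yield the user-facing status for each step, then run that step."""
    for name, step in steps.items():
        # Unknown steps fall back to a generic (hypothetical) message.
        yield STATUS_MESSAGES.get(name, "Processing your claim")
        step()


def placeholder_step() -> None:
    """Stand-in for real backend work."""


steps = {
    "damage_assessment": placeholder_step,
    "document_verification": placeholder_step,
    "risk_calculation": placeholder_step,
}

for message in run_claim(steps):
    print(message)
```

The total work is unchanged; the only difference from the "Black Box" version is that each step is named to the user as it starts.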

The Impact/Risk Matrix: Strategic Filtering of Information

A common pitfall in designing for AI is the assumption that more information is always better. To avoid the "Data Dump" trap, designers use an Impact/Risk Matrix to prioritize which decision nodes should be surfaced to the user. The matrix places each AI action on two axes: Impact (the significance of the outcome) and Reversibility (the ease with which the action can be undone), where low reversibility is what makes an action risky.

Low Impact / High Reversibility

These are routine tasks such as renaming a file or archiving a non-critical email. For these nodes, a passive notification or a simple log entry is sufficient. The system should prioritize speed and operate autonomously without interrupting the user.

High Impact / Low Reversibility

These are critical nodes where the AI takes actions that are difficult or impossible to undo, such as permanently deleting a database, executing a large financial trade, or sending a final legal draft to a client. For these moments, the audit dictates the use of an "Intent Preview"—a UI pattern that pauses the workflow and requires explicit human confirmation before the AI proceeds.

| Action Type | Impact | Reversibility | Recommended UI Pattern |
|---|---|---|---|
| File Renaming | Low | High | Passive Toast / Log |
| Archiving Email | Low | Low | Simple Undo Option |
| Sending Drafts | High | High | Notification + Review Trail |
| Deleting Server | High | Low | Modal / Explicit Permission |
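
The table can be read as a simple lookup from an (impact, reversibility) pair to a UI pattern. The sketch below encodes the four rows as written; the `Level` enum and the `ui_pattern` and `requires_confirmation` helpers are hypothetical names introduced only for illustration.

```python
from enum import Enum


class Level(Enum):
    LOW = "low"
    HIGH = "high"


# (impact, reversibility) -> recommended UI pattern, copied from the table rows.
UI_PATTERNS = {
    (Level.LOW, Level.HIGH): "Passive Toast / Log",
    (Level.LOW, Level.LOW): "Simple Undo Option",
    (Level.HIGH, Level.HIGH): "Notification + Review Trail",
    (Level.HIGH, Level.LOW): "Modal / Explicit Permission",
}


def ui_pattern(impact: Level, reversibility: Level) -> str:
    """Return the recommended UI pattern for one decision node."""
    return UI_PATTERNS[(impact, reversibility)]


def requires_confirmation(impact: Level, reversibility: Level) -> bool:
    """Only high-impact, hard-to-undo actions pause for an Intent Preview."""
    return impact is Level.HIGH and reversibility is Level.LOW


# Deleting a server: high impact, low reversibility.
print(ui_pattern(Level.HIGH, Level.LOW))             # Modal / Explicit Permission
print(requires_confirmation(Level.HIGH, Level.LOW))  # True
```

Keeping the mapping in one table-like structure makes it easy for designers and engineers to review together, and only one quadrant ever interrupts the user.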

Qualitative Validation: The "Wait, Why?" Test

Once the decision nodes are mapped and filtered, they must be validated against real user behavior. Designers use a protocol called the "Wait, Why?" Test. During usability sessions, users watch an agent complete a task and are encouraged to "think aloud." Whenever a user asks, "Wait, why did it do that?" or "Is it stuck?", it signals a failure in transparency.

In a study involving a healthcare scheduling assistant, researchers found that a four-second pause in the AI’s processing caused significant user distress. Participants were unsure if the AI was checking their personal calendar or the doctor’s schedule. By splitting that four-second wait into two distinct transparency moments—"Checking your availability" and "Syncing with provider schedule"—the team reduced user anxiety and increased the perceived reliability of the tool.

Operationalizing Transparency: Technical and Content Alignment

The implementation of a Decision Node Audit changes the traditional design-to-engineering handoff. It requires a "Logic Review," where engineers confirm that the system can actually expose the internal states the designers wish to show. Often, backend systems are built to return only a general "success" or "error" status. Designers must negotiate with engineers to create "technical hooks" that allow the UI to report specific actions, such as "Scanning for liability risks" versus a generic "Processing" message.
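
A "technical hook" could be as small as an optional callback that the backend invokes with a structured status event. The sketch below assumes a hypothetical `review_contract` task with a toy keyword check standing in for real analysis; the `StatusEvent` type, the step identifiers, and the "Summarizing findings" message are illustrative, not drawn from any particular system.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class StatusEvent:
    step: str     # machine-readable step identifier
    message: str  # user-facing copy written by content design


StatusHook = Callable[[StatusEvent], None]


def review_contract(text: str, on_status: Optional[StatusHook] = None) -> str:
    """Hypothetical backend task that reports each step through a hook."""

    def emit(step: str, message: str) -> None:
        # The hook is optional, so callers that ignore progress still work.
        if on_status is not None:
            on_status(StatusEvent(step, message))

    emit("liability_scan", "Scanning for liability risks")
    has_liability = "liability" in text.lower()  # toy stand-in for real analysis

    emit("summary", "Summarizing findings")
    return "needs_review" if has_liability else "success"


events: list[StatusEvent] = []
result = review_contract("Clause 4: Liability is limited.", on_status=events.append)
print(result)
print([event.message for event in events])
```

Because the hook is a plain callable, the UI layer can forward events to whatever it uses for display, while the backend still returns its usual final status.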

Content design also plays a pivotal role. A developer might write a technically accurate status such as "Executing function 402," which is meaningless to a layperson. Conversely, a designer might write "Thinking," which is too vague. Content designers act as translators, crafting phrases that are both technically grounded and human-friendly. For example, "Comparing local vendor prices to secure your Friday delivery" provides both the action (comparing prices) and the user-centric goal (securing the delivery).

Broader Implications and the Future of AI Trust

The shift toward transparent agentic AI has profound implications for industries governed by strict regulatory frameworks, such as finance, law, and medicine. In these sectors, "explainability" is often a regulatory obligation rather than just a UX preference. As AI agents take on more fiduciary and operational responsibilities, the ability to provide an "Action Audit" (a retrospective trail of why certain decisions were made) is likely to become the standard for enterprise software.
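
A minimal sketch of what an Action Audit might store is shown below: one entry per decision, with the reason and confidence recorded at the time the decision was made. The `AuditEntry` fields and the `ActionAudit` class are assumptions for illustration; real regulatory requirements would dictate the actual schema, retention, and tamper protection.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


def _utc_now() -> str:
    return datetime.now(timezone.utc).isoformat()


@dataclass(frozen=True)
class AuditEntry:
    step: str
    decision: str
    reason: str
    confidence: float
    timestamp: str = field(default_factory=_utc_now)


class ActionAudit:
    """Append-only, in-memory trail of what the agent decided and why."""

    def __init__(self) -> None:
        self._entries: list[AuditEntry] = []

    def record(self, step: str, decision: str, reason: str, confidence: float) -> AuditEntry:
        entry = AuditEntry(step, decision, reason, confidence)
        self._entries.append(entry)
        return entry

    def explain(self, step: str) -> list[str]:
        """Return every recorded reason for a given step, oldest first."""
        return [e.reason for e in self._entries if e.step == step]

    def to_json(self) -> str:
        return json.dumps([asdict(e) for e in self._entries], indent=2)


audit = ActionAudit()
audit.record(
    step="liability_clause",
    decision="flag_for_review",
    reason="Clause matched the standard template with only 65% certainty",
    confidence=0.65,
)
print(audit.explain("liability_clause"))
```

The same entries that drive live status messages could feed this trail, so the retrospective explanation matches what the user saw at the time.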

Furthermore, this approach addresses the growing phenomenon of "AI Fatigue." By surfacing only high-impact decision nodes and automating low-stakes tasks, companies can ensure that human intervention is reserved for moments that truly require judgment. This "human-in-the-loop" model preserves the efficiency of AI while maintaining the safety and oversight of human expertise.

Ultimately, trust in AI is not an emotional byproduct of a sleek interface; it is a mechanical result of predictable, clear communication. By adopting the Decision Node Audit, organizations can move away from the binary of the Black Box and the Data Dump, creating agentic systems that are not only powerful but also profoundly legible to the people who use them. As AI continues to integrate into the fabric of professional life, the transparency of its "internal math" will be the deciding factor in its success or failure in the marketplace.