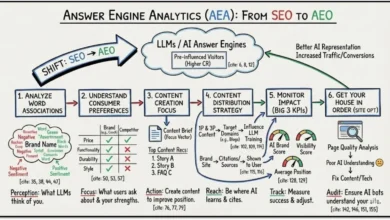

The Evolution of Marketing Analytics: Bridging the Insights Latency Gap through Artificial Intelligence

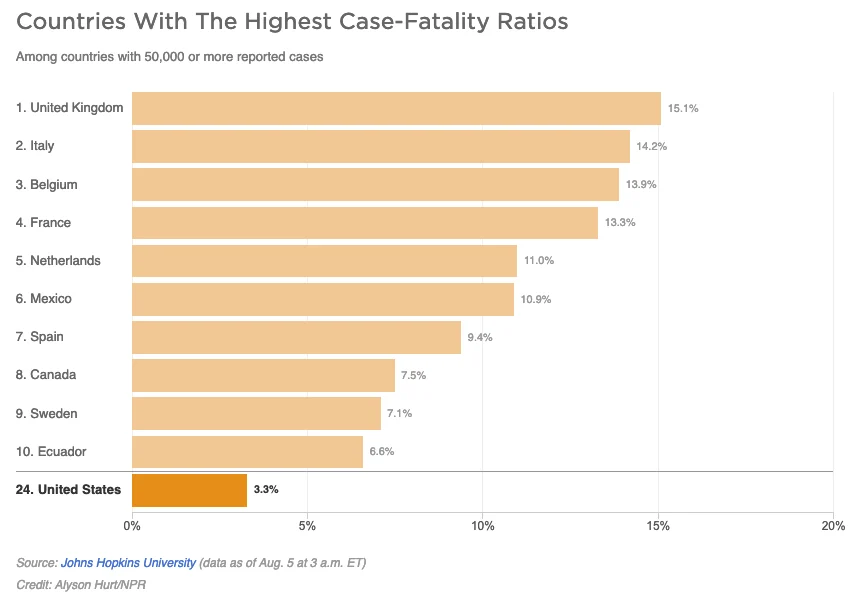

Modern enterprises face a paradox: massive investments in data infrastructure frequently fail to produce better decisions. Despite the proliferation of cloud-based business intelligence platforms, unified consumer views, and high-tech "clean rooms," many organizations remain tethered to the "HiPPO" model, in which the Highest Paid Person’s Opinion overrides empirical evidence. This disconnect is exacerbated by adherence to outdated marketing models, such as the traditional "funnel," which persist despite decades of data suggesting that consumer journeys are non-linear and increasingly complex. The primary obstacle facing the modern enterprise is no longer data scarcity but "insights latency": the time lag between data collection and the extraction of actionable intelligence.

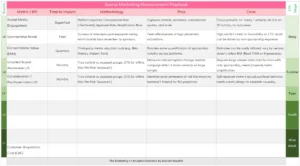

The Structural Failure of Traditional Analytics Workflows

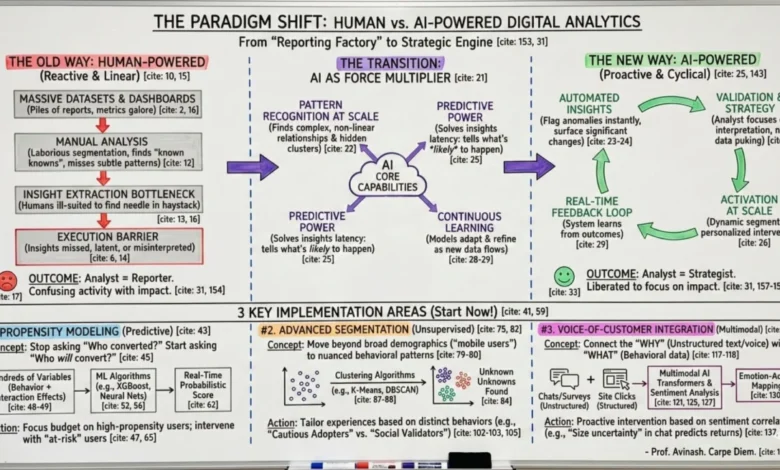

For over two decades, the standard operating procedure for data analytics has followed a linear, reactive path with four stages. The workflow typically begins with report generation, where analysts spend significant hours producing standard dashboards. This is followed by manual analysis, a process often limited to "known knowns" and prone to missing subtle, non-intuitive patterns within high-dimensional data. The third stage, insight extraction, requires analysts to manually identify findings and translate them into context for senior leadership. The final barrier is the "exec last-mile," where data-driven insights must compete with conflicting priorities, often resulting in misinterpretation or a total lack of action.

This human-centric model is increasingly ill-suited to the modern digital environment. Humans are naturally limited in their ability to track hundreds of variables per user engagement or to identify significant anomalies within massive, multi-dimensional datasets. As the volume of data grows, the "needle in the haystack" becomes harder to find, leading to a state of "data puking" rather than strategic activation. Industry experts note that this reactive posture is a leading cause of company obsolescence, as organizations fail to identify and pivot toward emerging trends in real time.
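To make the anomaly problem concrete, here is a minimal sketch of automated flagging using a trailing-window z-score. The function name `flag_anomalies`, the window size, the threshold, and the daily conversion counts are all illustrative assumptions, not something the article specifies; production systems would typically also account for seasonality and trend.

```python
from statistics import mean, stdev

def flag_anomalies(values, window=7, threshold=3.0):
    """Return indexes whose value sits more than `threshold` standard
    deviations away from the mean of the preceding `window` values."""
    flagged = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu, sigma = mean(history), stdev(history)
        # Skip flat histories, where a z-score is undefined.
        if sigma > 0 and abs(values[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged

# Hypothetical daily conversion counts with one sharp drop at index 8.
daily_conversions = [120, 118, 125, 122, 119, 121, 123, 124, 60, 122, 120]
anomalous_days = flag_anomalies(daily_conversions)
```

The same check can run across hundreds of metric and segment combinations at once, which is the part a human analyst cannot do by eye.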

The Chronology of the 10/90 Rule: From Humans to AI

To understand the current shift in analytics, one must look back at the foundational principles established at the dawn of web analytics. In 2006, the "10/90 Rule" was introduced as a gold standard for investment: for every $100 spent on smart decisions, $10 should go toward tools and implementation, while $90 should be invested in the human analysts who interpret the data. This rule revolutionized the industry by shifting focus from software to soulware.

However, the advent of generative AI and advanced machine learning has necessitated a fundamental update to this principle. The "New 10/90 Rule" posits that the $100 investment should now be split between $10 for brilliant human analytical strategists and $90 for AI activation. This shift does not diminish the value of the human element; rather, it elevates the analyst from a reporter to a strategist. Over time, the total cost of these decisions is expected to decrease to $70 or $80, even as the quality, scale, and speed of intelligence increase exponentially.
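The arithmetic behind the two versions of the rule can be written out as a small sketch. The helper `split_budget` is hypothetical, and applying the same 10/90 ratio to the projected $70 total is an assumption made here for illustration; the article does not say how the reduced budget would be divided.

```python
def split_budget(total: float, people_share: float) -> dict[str, float]:
    """Split a decision-making budget between people and technology."""
    if not 0.0 <= people_share <= 1.0:
        raise ValueError("people_share must be between 0 and 1")
    people = round(total * people_share, 2)
    return {"people": people, "technology": round(total - people, 2)}

# 2006 rule: $90 of every $100 goes to human analysts, $10 to tools.
original_rule = split_budget(100, people_share=0.90)

# New rule: $10 goes to analytical strategists, $90 to AI activation.
new_rule = split_budget(100, people_share=0.10)

# Assumption: the 10/90 ratio still holds once total cost falls to $70.
projected = split_budget(70, people_share=0.10)
```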

Strategic Pillar I: Predictive Propensity Modeling

One of the most transformative applications of AI in analytics is the transition from descriptive to predictive modeling. Traditional analytics is descriptive: it uses historical data to identify who converted in the past. AI-driven propensity modeling, by contrast, estimates who is likely to convert in the future. By analyzing thousands of behavioral features (such as scroll depth, product views, cart additions, and visit frequency), machine learning (ML) algorithms can assign a real-time probability score to every user.

Several key algorithms have emerged as the backbone of this predictive power:

- XGBoost (Extreme Gradient Boosting): Widely regarded as the gold standard for tabular data, this algorithm excels at conversion prediction by combining many weak predictive models (shallow decision trees, each trained to correct the errors of those before it) into a highly accurate ensemble.

- Random Forest: This approach is particularly effective when analysts need to understand "feature importance," identifying which specific behaviors most strongly predict a conversion.

- Deep Learning: For massive datasets with non-linear relationships, deep learning architectures can uncover hidden patterns that traditional statistical models might overlook.

- Survival Analysis: Originally developed for medical statistics, this technique is now used to predict not just whether a user will convert or churn, but when, allowing marketing interventions to be timed accordingly.
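As an illustration of the "when" question that survival analysis answers, the sketch below implements the Kaplan-Meier estimator, one standard survival-analysis method, in plain Python. The article does not name a specific estimator, so this choice is an assumption, as are the function name `kaplan_meier` and the toy data. Here "survival" means "has not yet converted," and censored users are those still unconverted when observation ended.

```python
from collections import Counter

def kaplan_meier(durations, observed):
    """Return [(day, probability_not_yet_converted)] at each conversion day.

    durations: days from first visit until conversion or end of observation.
    observed:  True if the user converted, False if censored.
    """
    events = Counter(t for t, e in zip(durations, observed) if e)
    exits = Counter(durations)  # every user leaves the risk set at their duration
    at_risk = len(durations)
    survival = 1.0
    curve = []
    for t in sorted(exits):
        converted = events.get(t, 0)
        if converted:
            survival *= 1 - converted / at_risk
            curve.append((t, survival))
        at_risk -= exits[t]
    return curve

# Hypothetical cohort of eight trial users.
days = [2, 3, 3, 5, 8, 8, 10, 12]
converted = [True, True, False, True, True, False, False, True]
curve = kaplan_meier(days, converted)
```

Reading the curve at a given day gives the estimated share of users who have not converted by then, which is the quantity a timed intervention would be scheduled against.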

Data from recent implementations suggests that propensity modeling can lead to a 35% to 60% improvement in conversion rates for targeted segments and a 20% to 35% reduction in acquisition costs.
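The scoring step described in this section can be sketched in a few lines. To stay dependency-free, the example below swaps in plain logistic regression trained by gradient descent rather than XGBoost or a random forest; it is a stand-in for the idea of a propensity score, not the method the article's implementations used. The feature order (scroll depth, product views, cart additions, visits), the training rows, and the visitor names are all hypothetical.

```python
import math

def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))

def score(weights, features) -> float:
    """Propensity score: estimated probability that this user converts."""
    z = weights[0] + sum(w * x for w, x in zip(weights[1:], features))
    return sigmoid(z)

def fit_logistic(rows, labels, lr=0.05, epochs=3000):
    """Fit weights (bias first) by batch gradient descent on log loss."""
    weights = [0.0] * (len(rows[0]) + 1)
    for _ in range(epochs):
        grads = [0.0] * len(weights)
        for x, y in zip(rows, labels):
            error = score(weights, x) - y
            grads[0] += error
            for j, xj in enumerate(x):
                grads[j + 1] += error * xj
        weights = [w - lr * g / len(rows) for w, g in zip(weights, grads)]
    return weights

# Toy history: (scroll_depth, product_views, cart_additions, visits), converted?
history = [
    ((0.9, 6, 2, 5), 1), ((0.8, 4, 1, 3), 1), ((0.7, 5, 1, 4), 1),
    ((0.6, 3, 1, 2), 1), ((0.3, 4, 1, 3), 1), ((0.5, 2, 0, 2), 0),
    ((0.3, 1, 0, 1), 0), ((0.2, 1, 0, 1), 0), ((0.4, 3, 0, 1), 0),
    ((0.8, 2, 0, 2), 0),
]
weights = fit_logistic([x for x, _ in history], [y for _, y in history])

# Score current visitors and rank them for targeting.
visitors = {
    "visitor_a": (0.9, 5, 2, 4),
    "visitor_b": (0.2, 1, 0, 1),
    "visitor_c": (0.6, 3, 1, 2),
}
scores = {name: score(weights, feats) for name, feats in visitors.items()}
ranked = sorted(scores, key=scores.get, reverse=True)
```

In practice the model would be trained on far more rows and features, evaluated on held-out data, and refreshed as behavior shifts; the point here is only the shape of the pipeline: features in, probability out, users ranked by score.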

Strategic Pillar II: Advanced Algorithmic Segmentation

The practice of segmenting users by broad categories, such as "mobile users" or "logged-in users," is increasingly viewed as insufficient. Such broad strokes miss the nuanced behavioral patterns that define modern consumer intent. Unsupervised learning algorithms allow for the identification of natural clusters within data without predefined categories: K-Means Clustering groups users by behavioral similarity, while Principal Component Analysis (PCA), a dimensionality-reduction technique rather than a clustering method, is commonly applied first to compress many correlated behavioral dimensions into a few components.

In a recent application for a B2B SaaS client, clustering algorithms analyzed session data across 28 behavioral dimensions. Rather than a simple "free trial" segment, the AI identified distinct groups: "Feature Explorers," "Integration-Focused Users," and "High-Velocity Evaluators." By tailoring the onboarding experience to these specific, algorithmically derived segments, the company saw activation rates increase by over 25%. Furthermore, this automated approach can reduce the time spent on manual cohort analysis by up to 75%.
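A minimal K-Means sketch shows how such segments can emerge without predefined labels. It uses three hypothetical, pre-scaled behavioral dimensions (features tried, integration pages viewed, pages per minute) instead of the 28 in the case above, and a deterministic farthest-point initialization chosen here for reproducibility; neither detail comes from the article. The segment names are attached by a person after inspecting the centroids, not produced by the algorithm.

```python
import math

def kmeans(points, k, iterations=20):
    """Cluster points with K-Means using farthest-point initialization."""
    centroids = [points[0]]
    while len(centroids) < k:
        # Pick the point farthest from every centroid chosen so far.
        centroids.append(
            max(points, key=lambda p: min(math.dist(p, c) for c in centroids))
        )
    labels = []
    for _ in range(iterations):
        # Assignment step: each point joins its nearest centroid.
        labels = [
            min(range(k), key=lambda i: math.dist(p, centroids[i]))
            for p in points
        ]
        # Update step: each centroid moves to the mean of its members.
        for i in range(k):
            members = [p for p, lab in zip(points, labels) if lab == i]
            if members:
                centroids[i] = tuple(sum(col) / len(members) for col in zip(*members))
    return labels, centroids

# Hypothetical trial sessions: (features_tried, integrations_viewed, pages_per_minute)
sessions = [
    (0.90, 0.10, 0.20), (0.80, 0.20, 0.30), (0.85, 0.15, 0.25),  # explore features
    (0.20, 0.90, 0.30), (0.10, 0.80, 0.20), (0.15, 0.85, 0.25),  # focus on integrations
    (0.30, 0.20, 0.90), (0.20, 0.30, 0.95), (0.25, 0.10, 0.85),  # move quickly
]
labels, centroids = kmeans(sessions, k=3)
```

With real data, the number of clusters is itself a judgment call, and features on different scales need to be normalized before distances are meaningful.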

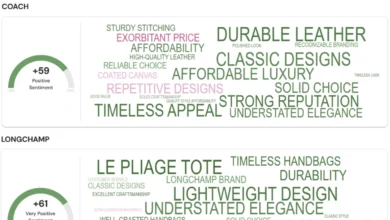

Strategic Pillar III: Integrating Voice of Customer with Behavioral Data

A long-standing challenge in analytics has been the "Trinity" gap—the inability to connect the "what" (behavioral data) with the "why" (customer sentiment). Historically, survey responses, support tickets, and chat transcripts lived in silos separate from website analytics. Modern multimodal AI systems are now bridging this gap by processing structured behavioral data alongside unstructured text and voice data.

Advanced Natural Language Processing (NLP) and sentiment analysis have evolved beyond simple positive/negative labels. They can now detect specific emotions, urgency, and intent. For instance, an e-commerce platform that integrated chatbot transcripts with behavioral data discovered that "technical friction" in the checkout process was a stronger predictor of churn than price sensitivity. By addressing these "why" factors in real time, organizations have seen improvements of up to 12 points in Net Promoter Scores (NPS) and a 25% reduction in cart abandonment.
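The join between the "why" and the "what" can be sketched as follows. Simple keyword matching stands in for a real NLP model here, which is a deliberate simplification; the tag names, keyword lists, transcripts, and session records are all hypothetical toy data and do not reproduce the e-commerce finding described above.

```python
from collections import defaultdict

# Assumed keyword lists; a production system would use a trained classifier.
FRICTION_TERMS = {
    "technical_friction": ("error", "stuck", "crash", "won't load"),
    "price_sensitivity": ("expensive", "discount", "cheaper", "coupon"),
}

def tag_transcript(text):
    """Return the set of friction tags whose keywords appear in the text."""
    lowered = text.lower()
    return {
        tag for tag, terms in FRICTION_TERMS.items()
        if any(term in lowered for term in terms)
    }

def abandonment_by_tag(transcripts, sessions):
    """Join chat transcripts to session outcomes on user ID and compute
    the cart abandonment rate for each friction tag."""
    totals = defaultdict(lambda: [0, 0])  # tag -> [abandoned, total]
    for user_id, text in transcripts.items():
        if user_id not in sessions:
            continue
        for tag in tag_transcript(text):
            totals[tag][0] += sessions[user_id]["abandoned_cart"]
            totals[tag][1] += 1
    return {tag: abandoned / total for tag, (abandoned, total) in totals.items()}

transcripts = {
    "u1": "The payment page shows an error every time I click pay.",
    "u2": "Is there a discount code? This seems expensive.",
    "u3": "Checkout is stuck on the shipping step.",
    "u4": "Do you have a coupon for first orders?",
    "u5": "The app will crash when I open my basket.",
}
sessions = {
    "u1": {"abandoned_cart": True},
    "u2": {"abandoned_cart": False},
    "u3": {"abandoned_cart": True},
    "u4": {"abandoned_cart": True},
    "u5": {"abandoned_cart": False},
}
rates = abandonment_by_tag(transcripts, sessions)
```

The design point is the shared key: once transcripts and behavioral records carry the same user identifier, sentiment-derived tags become just another feature that can be compared against outcomes.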

The Future Landscape: The "Analyst 2028" Framework

As AI assumes control over the mundane aspects of data processing and anomaly detection, the role of the data analyst is undergoing a radical transformation. Industry forecasts suggest that the traditional "analyst-as-reporter" role will likely cease to exist within the next 18 to 24 months. By 2028, the successful analyst will be a "S.H.I.F.T." professional: Strategic, Holistic, Insightful, Future-proof, and Tech-savvy.

This evolution requires a shift in mindset from "insights hunting" to "validation and activation." Instead of spending 80% of their time finding data, analysts will spend 90% of their time determining how to best apply AI-generated insights to business strategy. The goal is a state of "liquid merchandising" and real-time pricing optimization, where the digital experience adapts instantly to the predicted needs of each individual user.

Conclusion and Broader Implications

The integration of AI into marketing analytics represents the most significant paradigm shift since the field’s inception. The organizations that thrive in this new era will not be those with the largest data lakes, but those that can most effectively reduce insights latency. By handing over control of data processing to AI, companies can move from a reactive, historical view of their business to a proactive, predictive one.

The implications extend beyond marketing. Predictive Customer Lifetime Value (CLV) modeling and automated anomaly detection are beginning to influence supply chain management, human resources, and financial forecasting. While the transition requires a significant shift in investment and organizational culture, the potential for exponential growth in decision quality and operational efficiency makes AI-powered analytics an existential necessity for the modern enterprise. As the industry moves toward Artificial General Intelligence (AGI), the foundations laid today in predictive modeling and automated segmentation will determine the market leaders of the next decade.