Can Google crawl your links? Understanding how search engines like Google discover and process your website’s links is crucial for SEO. This exploration delves into the world of web crawling, examining the factors that influence Google’s ability to find and index your content. From the fundamental mechanics of link crawling to the nuances of link structure and attributes, we’ll uncover what makes website indexing succeed.

This comprehensive guide unpacks the complexities of link crawling, examining various link types, structures, and attributes, and the impact they have on your website’s visibility. We’ll analyze the role of robots.txt files, link quality, and website structure in guiding Google’s crawlers, and how to optimize your links for maximum crawlability. We’ll also address common issues that can hinder crawling and Google’s policies to ensure you’re building a site Google can easily navigate.

Understanding Link Crawling

Web crawling, a fundamental process in search engine operation, is crucial for discovering and indexing web pages. Understanding how search engines navigate the vast expanse of the internet is key to comprehending how they deliver relevant results. This process hinges heavily on the structure and nature of links connecting different web pages. Search engines use automated programs, often called spiders or crawlers, to systematically explore the World Wide Web.

These automated agents follow links from one page to another, collecting information about the pages they visit. This iterative process allows search engines to build a comprehensive index of the internet’s content. The efficiency of this process, and the accuracy of the search results, depends greatly on the quality and structure of the web’s linking architecture.

Defining Web Crawling

Web crawling, or web spidering, is the automated process by which search engines discover and index web pages. It involves a software program systematically traversing the web by following hyperlinks. This process is essential for search engines to create a searchable index of the internet’s vast content.

Search Engine Spider Indexing

Search engine spiders begin by identifying a starting set of URLs. These might be provided by a seed list, or discovered from existing indexed pages. The spider then follows the links on each page it visits, adding new URLs to its queue for future exploration. This process continues recursively, following links across different websites. Each visited page is analyzed, extracting text, images, and other relevant data.

This data is then processed, organized, and stored in the search engine’s index. The frequency of crawling varies depending on the website’s popularity and updates.
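The link-following step described above depends on pulling hyperlinks out of each fetched page. The following is a minimal sketch of that idea using only Python's standard library; the `LinkExtractor` class, the `extract_links` helper, and the sample HTML are hypothetical names and data chosen for illustration, not a description of how any real search engine parses pages.

```python
from html.parser import HTMLParser


class LinkExtractor(HTMLParser):
    """Collects the href value of every <a> tag in an HTML document."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs for the tag.
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def extract_links(html):
    """Return all link targets found in the given HTML string."""
    parser = LinkExtractor()
    parser.feed(html)
    return parser.links


# Hypothetical page content used only for illustration.
sample_html = (
    '<p><a href="/about-us">About</a> '
    '<a href="https://www.example.com/blog">Blog</a></p>'
)
print(extract_links(sample_html))
```

Each extracted link would then be added to the crawl queue, provided it has not been seen before.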

The Role of Links in Crawling

Links are fundamental to the crawling process. They act as pathways, directing the search engine spider from one page to another. The more links a page has, the more opportunities the search engine has to discover new content. The quality and relevance of the links are also important factors in determining the importance of a page.

How Search Engines Discover New Pages

Search engines primarily discover new pages by following links from already indexed pages. This process, known as link crawling, is a fundamental aspect of maintaining a current and comprehensive index of the internet. New sites and pages can be discovered this way, expanding the scope of the search engine’s knowledge.

Different Types of Links

Different types of links have different impacts on how search engines crawl the web. Understanding these distinctions helps in comprehending the intricate web of interconnected pages.

Link Type Impact on Crawling

| Link Type | Impact on Crawling | Example | Explanation |
|---|---|---|---|
| Internal | Facilitates crawling within a website. | A link from a blog post to another blog post on the same site. | Internal links help search engines explore deeper into a website, improving the discoverability of its content. |
| External | Allows crawling across different websites. | A link from a blog post to an article on a different news site. | External links are vital for discovering new content and understanding the relationships between different websites. |
| Broken | May hinder crawling, and indicates outdated or removed content. | A link that points to a non-existent page. | Broken links can lead to wasted crawl time and may suggest the content is no longer relevant. |
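To make the internal/external distinction concrete, here is a small sketch that classifies a link by comparing hostnames. The `classify_link` function and the example URLs are assumptions made for illustration. Note that this simple comparison treats subdomains as external, and that detecting a broken link would additionally require requesting the URL and inspecting the response.

```python
from urllib.parse import urljoin, urlparse


def classify_link(page_url, href):
    """Return 'internal' or 'external' for an href found on page_url."""
    # Resolve relative hrefs against the page they appear on.
    target = urljoin(page_url, href)
    same_host = urlparse(target).netloc == urlparse(page_url).netloc
    return "internal" if same_host else "external"


page = "https://www.example.com/blog/post-1"  # hypothetical page URL
print(classify_link(page, "/blog/post-2"))
print(classify_link(page, "https://news.example.org/story"))
```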

Factors Affecting Crawling of Links

Search engine crawlers, also known as spiders or bots, are essential for indexing web pages. Understanding the factors that influence how frequently these crawlers visit a particular link is crucial for search engine optimization (SEO). This knowledge allows website owners to optimize their site for better visibility in search results. Crawling frequency isn’t random; it’s driven by various factors, including the quality of links, website structure, and the search engine’s algorithms.

Understanding these factors empowers website owners to improve their site’s crawlability and consequently its ranking.

Link Quality and Crawling Frequency

Link quality significantly impacts crawling frequency. High-quality links, often originating from reputable and authoritative websites, signal to search engines that the linked page is valuable and trustworthy. Conversely, low-quality links, such as those from spammy or irrelevant sites, may result in decreased crawling frequency, potentially hindering the site’s visibility. This is because search engines prioritize quality over quantity in their indexing process.

For example, a link from a well-established news publication is more likely to trigger frequent crawling than a link from a newly created blog with little to no authority.

Robots.txt Files and Crawling Control

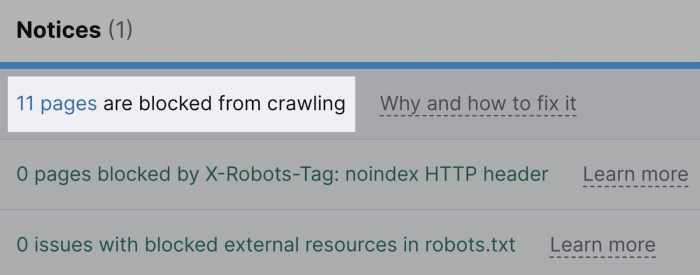

Robots.txt files control search engine crawlers’ access to specific parts of a website. They tell crawlers which directories or files should not be crawled. A properly configured robots.txt file lets website owners keep crawlers away from pages that are not yet ready for public view. Note that robots.txt is a set of instructions for well-behaved crawlers rather than an access control: it does not secure sensitive data, and a blocked URL can still appear in the index if other pages link to it. This control helps manage crawl activity and focus crawlers on relevant content.

For example, a website might want to prevent crawlers from accessing a development section or a private members’ area.
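As a sketch of how such rules are evaluated, the snippet below parses a small robots.txt with Python's standard `urllib.robotparser` module. The `/dev/` and `/members/` paths and the example.com URLs are hypothetical, chosen to mirror the development section and members’ area mentioned above.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for illustration.
robots_txt = """\
User-agent: *
Disallow: /dev/
Disallow: /members/
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())

# A public blog post is allowed; the development section is not.
print(parser.can_fetch("Googlebot", "https://www.example.com/blog/post"))
print(parser.can_fetch("Googlebot", "https://www.example.com/dev/new-page"))
```

The standard-library parser is a convenient way to sanity-check rules locally, though it may not match every detail of how a particular search engine interprets robots.txt.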

Website Structure and Crawlability

Website structure plays a significant role in how easily search engine crawlers can navigate and index the site’s content. A well-structured site, with clear navigation and logical organization of pages, allows crawlers to easily find and follow links, leading to more frequent crawling. Conversely, a complex or poorly structured site with broken links or confusing hierarchies might discourage crawlers, potentially leading to less frequent indexing.

Consider a website with a logical sitemap, internal linking structure, and easily navigable menus. This would facilitate the crawling process and ensure thorough indexing.

Static vs. Dynamic Pages

Static pages have fixed content and are delivered directly to the user’s browser. Dynamic pages, however, generate content on the fly based on user input or database queries. Search engine crawlers generally crawl static pages more efficiently than dynamic pages, as the content is readily available. Dynamic pages might require additional processing by the crawler, potentially leading to reduced crawling frequency.

Static pages are indexed faster and require less resource consumption by the search engine.

Page Load Speed and Crawling Efficiency

Page load speed directly impacts crawling efficiency. Slow-loading pages can cause crawlers to spend more time on a single page, reducing the number of pages they can crawl in a given time frame. Faster loading pages allow crawlers to quickly process and move on to other pages, thus increasing the overall crawling efficiency. This is particularly important in competitive online environments, where speed often determines success.

Link Crawling Process Flowchart

The following flowchart illustrates a simplified representation of the link crawling process.

Flowchart (not shown): the process begins with a seed URL, then crawls its links, checks robots.txt, processes the page, and adds new URLs to the queue, continuing until the queue is empty or a maximum depth is reached.
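The same steps can be sketched in code. This is a simplified breadth-first crawl over an in-memory link graph rather than real HTTP requests; the `SITE` dictionary, the `DISALLOWED_PREFIXES` tuple (standing in for a robots.txt check), and the `crawl` function are all assumptions made for illustration.

```python
from collections import deque

# Hypothetical link graph standing in for real page fetches.
SITE = {
    "/": ["/blog", "/about-us"],
    "/blog": ["/blog/post-1", "/private/draft"],
    "/about-us": ["/"],
    "/blog/post-1": ["/blog/post-2"],
    "/blog/post-2": [],
    "/private/draft": [],
}
# Stand-in for robots.txt rules.
DISALLOWED_PREFIXES = ("/private/",)


def crawl(seed, max_depth):
    """Breadth-first crawl from seed, returning pages in visit order."""
    queue = deque([(seed, 0)])
    seen = {seed}
    visited = []
    while queue:
        url, depth = queue.popleft()
        if url.startswith(DISALLOWED_PREFIXES):
            continue  # robots.txt check: skip disallowed paths
        visited.append(url)  # "process the page"
        if depth == max_depth:
            continue  # do not follow links beyond the depth limit
        for link in SITE.get(url, []):
            if link not in seen:
                seen.add(link)
                queue.append((link, depth + 1))
    return visited


print(crawl("/", max_depth=2))
```

With a depth limit of 2, `/blog/post-2` is never reached because it sits three clicks from the seed, which illustrates why deeply buried pages can be harder for crawlers to discover.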

Link Structure and Crawlability

A well-structured website is crucial for search engine optimization (SEO). Search engine crawlers, like Googlebot, need to easily navigate your site’s links to understand its content and index it properly. A logical and consistent link structure contributes significantly to a positive user experience and helps search engines understand the relationships between different pages. Proper link structure directly affects how effectively search engines can crawl and index your website.

This, in turn, impacts your website’s visibility in search results. By understanding the nuances of relative and absolute URLs, you can optimize your site’s architecture for better crawling and indexing, leading to improved search engine rankings.

Relative URLs

Relative URLs are paths resolved against the URL of the current page. They are often used for internal links within a website. Relative URLs are generally efficient for internal linking within the same domain. They are also flexible: when the site moves to a new domain or switches from http to https, internal links with relative paths don’t need to be updated. A link must still be updated if the target page’s own path changes.

Absolute URLs

Absolute URLs are complete URLs, including the protocol (http or https), domain name, and path. Using absolute URLs ensures that the crawler can locate the linked page regardless of the current page’s location. This approach is necessary for external links and is recommended for links to resources outside the website. They also provide clarity and maintainability, especially for links that might change or be used across different websites.
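A crawler has to turn every relative link into an absolute URL before it can request it. The sketch below shows that resolution using `urljoin` from Python's standard library; the base URL and the hrefs are hypothetical examples.

```python
from urllib.parse import urljoin

# Hypothetical page on which the links were found.
base = "https://www.example.com/blog/post-1"

hrefs = [
    "post-2",                          # relative to the current directory
    "/about-us",                       # relative to the site root
    "https://other.example.org/page",  # already absolute
]

resolved = [urljoin(base, href) for href in hrefs]
for href, url in zip(hrefs, resolved):
    print(f"{href} -> {url}")
```

The first two hrefs resolve to URLs on www.example.com, while the absolute URL passes through unchanged.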

Good and Bad Link Structures

Proper link structures contribute significantly to a website’s performance. Using relative URLs for internal links and absolute URLs for external links is a standard practice.

- Good Link Structure Example: Using relative URLs for internal navigation makes the website more flexible and maintainable. For instance, linking to the “about us” page from the homepage using a relative path (`/about-us`) makes it easy to move the page without affecting the links.

- Bad Link Structure Example: Using absolute URLs for internal links is redundant and can hinder SEO. For instance, using the full URL (`https://www.example.com/about-us`) for every internal link on the site adds unnecessary complexity and redundancy.

Optimizing Links for Improved Crawling

Optimizing links for improved crawling involves several best practices:

- Consistent Structure: Using a consistent link structure helps search engine crawlers understand the site’s hierarchy and relationships between pages. This helps them crawl the website more efficiently.

- Descriptive Anchor Text: Using descriptive anchor text for internal links helps search engines understand the context of the linked page. For example, using “contact us” instead of a generic link improves understanding.

- Avoiding Broken Links: Broken links can negatively impact SEO and user experience. Regularly checking and fixing broken links is crucial for a healthy website.

Link Structure Examples

The table below showcases examples of various link structures and their impact on crawling.

Link Issues and Crawling

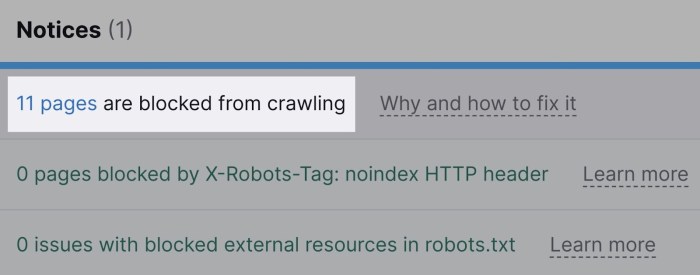

Understanding how search engine crawlers navigate your website’s links is crucial for maintaining good search engine rankings. A seemingly minor issue with a link can significantly impact a site’s visibility and organic traffic. These issues, ranging from simple broken links to complex server errors, can hinder the crawling process and ultimately affect your site’s performance in search results. Common link problems can prevent search engine crawlers from fully indexing your website.

These problems can stem from various sources, including broken links, redirect chains, and server errors. Understanding these issues and their impact on crawlability is essential for optimizing your website’s performance in search engine results pages (SERPs).

Broken Links

Broken links are a frequent problem that significantly impacts crawlability. They occur when a link points to a resource that no longer exists or has been moved without a proper redirect. Crawlers encounter these links, record them as errors, and potentially move on, skipping important content. This ultimately results in a less comprehensive index of your site’s content for search engines.

Redirect Chains

Redirect chains occur when a user or crawler is directed from one URL to another, and then to another, and so on, until they reach the final destination. While redirects are sometimes necessary, excessively long chains can confuse crawlers and lead to indexing issues. Long chains make it difficult for search engines to properly understand the destination of the original link and can cause delays in the crawling process.
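One way to spot long chains is to follow each redirect and count the hops. The sketch below does this against an in-memory redirect map rather than live HTTP responses; the `REDIRECTS` data, the `resolve_redirects` function, and the `max_hops` default of 5 are assumptions for illustration, not a limit documented by any search engine.

```python
# Hypothetical redirect map: each key redirects to its value.
REDIRECTS = {
    "/old-product": "/product",
    "/product": "/products/widget",
    "/products/widget": "/shop/widget",
}


def resolve_redirects(url, redirects, max_hops=5):
    """Follow redirects from url; return (final_url, chain of URLs)."""
    chain = [url]
    while url in redirects:
        url = redirects[url]
        if url in chain:
            raise ValueError("redirect loop detected")
        chain.append(url)
        if len(chain) - 1 > max_hops:
            raise ValueError("too many redirects")
    return url, chain


final_url, chain = resolve_redirects("/old-product", REDIRECTS)
print(final_url, "after", len(chain) - 1, "hops")
```

A chain like this one is usually best collapsed so that `/old-product` redirects straight to the final URL in a single hop.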

Server Errors

Error responses such as 404 Not Found, 500 Internal Server Error, or 503 Service Unavailable can interrupt the crawling process. A 404 is technically a client error (the requested resource does not exist), while 500 and 503 indicate problems on your website’s server. Search engine crawlers record these errors and may skip the affected page or, if errors are widespread, reduce crawling of that section or of the entire website.
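When auditing crawl errors, it helps to group status codes by class, since 4xx codes (including 404) are client errors while 5xx codes are server errors. The following sketch uses Python's standard `http.HTTPStatus` enum for the reason phrases; the `classify_status` helper is a hypothetical name introduced for illustration.

```python
from http import HTTPStatus


def classify_status(code):
    """Group an HTTP status code by its class."""
    if 200 <= code < 300:
        return "success"
    if 300 <= code < 400:
        return "redirect"
    if 400 <= code < 500:
        return "client error"
    if 500 <= code < 600:
        return "server error"
    return "other"


for code in (404, 500, 503):
    print(code, HTTPStatus(code).phrase, "->", classify_status(code))
```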

Impact on Search Engine Rankings

Crawl errors significantly impact search engine rankings. When crawlers encounter errors, they record these as issues with your site. Search engines use this data to determine the overall health and usability of your website. This, in turn, impacts the site’s ranking in search results. A high number of crawl errors can negatively affect your site’s visibility and ultimately lead to a lower ranking.

Examples of Common Link Issues and Their Impact

Imagine a blog post with a link to a previous article. If that previous article has been deleted, the link becomes broken. Crawlers encounter this error and move on, missing out on the opportunity to index the relevant content. This could potentially result in a lower ranking for the entire blog post. Similarly, a long redirect chain from a product page to a shopping cart, followed by a checkout page, might confuse crawlers.

They might struggle to identify the ultimate destination, resulting in inaccurate indexing. Server errors, such as a 500 Internal Server Error on a crucial product page, can prevent crawlers from accessing that information entirely. The consequences of such issues range from reduced visibility in search results to a complete absence from the index.

Table of Link Issues

| Issue Type | Description | Impact on Crawling | Example |
|---|---|---|---|
| Broken Link | A link points to a non-existent or removed resource. | Crawler encounters an error, potentially skipping the linked content. | A link to a deleted blog post. |
| Redirect Chain | A series of redirects that take the crawler through multiple URLs before reaching the destination. | Crawlers might struggle to understand the final destination or experience delays. | A product page redirecting to a shopping cart, then to checkout, then to a thank-you page. |
| Server Errors | Error responses such as 404 (a client error), 500, or 503 encountered by the crawler. | Crawler encounters an error, potentially skipping the entire section or page. | A 500 Internal Server Error on a vital page. |

Google’s Crawling Policies

Google’s crawling policies are crucial for maintaining a healthy and discoverable web. These policies dictate how Google’s search bots, also known as crawlers, interact with websites, ensuring a fair and consistent indexing process. Understanding these policies is essential for website owners to optimize their site for search engine visibility and avoid penalties. Google’s approach to crawling is based on a principle of respect for website owners’ decisions.

They strive to crawl websites efficiently and responsibly, but they also need to ensure that their systems don’t become overwhelmed or misused. Therefore, guidelines are established to maintain a balanced and effective search experience.

Google’s Guidelines for Website Owners

Website owners are expected to adhere to certain guidelines to facilitate efficient crawling and indexing. These guidelines aim to maintain a positive user experience by preventing spam and ensuring quality content is ranked. A core principle is that websites should be structured and maintained in a way that is transparent to the crawlers.

- Respect robots.txt: The robots.txt file is a crucial tool for website owners. It dictates which parts of a website Googlebot (and other crawlers) should or should not access. Proper use of robots.txt helps avoid overwhelming the Googlebot and keeps crawlers out of non-public or low-value areas of a website. A well-structured robots.txt file guides crawlers, preventing them from accessing inappropriate or unnecessary content.

- Avoid cloaking and doorway pages: Cloaking and doorway pages are deceptive techniques used to present different content to search engines than to users. Google actively combats these tactics, as they undermine the search experience. Websites using such methods are likely to face penalties, including reduced visibility or removal from search results.

- Prioritize high-quality content: Google prioritizes websites with original, valuable content. Focus on providing relevant information to users. Avoid keyword stuffing or other practices that manipulate search engine results. This ensures that the content is useful to users and accurately reflects the website’s purpose.

Implications of Violating Policies

Violating Google’s crawling policies can have significant repercussions for website owners. These consequences range from reduced visibility in search results to complete removal from the index. Understanding the implications is vital for maintaining a positive online presence.

- Penalty of reduced visibility: Websites that repeatedly violate Google’s policies may see a decrease in their search engine ranking. This can lead to a decline in organic traffic, impacting the website’s overall performance and profitability.

- Manual action: Severe violations can lead to manual actions by Google. This involves a more in-depth review of the website, and potential penalties may include removal from search results. These actions usually result from deliberate violations of policies.

- Algorithm changes: Google frequently updates its algorithms. Changes in algorithms can impact websites that have implemented practices that manipulate search results. These changes can be difficult to adapt to, potentially leading to a temporary drop in ranking.

Examples of How Google Handles Various Link Scenarios

Google’s crawling policies are applied across a wide range of scenarios. Understanding how Google handles different link situations can help website owners avoid potential issues.

- Broken links: Google’s crawlers encounter broken links regularly. While they don’t penalize websites automatically for broken links, a high volume of broken links can indicate issues with website maintenance. This can affect the overall crawl efficiency.

- Link spam: Spammy links, often created to artificially inflate a website’s authority, are actively identified by Google. These links are typically ignored or discounted so that they do not affect search results, and sites that rely on them may be penalized.

- Link exchanges: Exchanging links with unrelated websites can be perceived as manipulative. Google can recognize these practices and potentially reduce the ranking of the affected sites. Focus on creating natural and relevant links.

Google’s Crawling Best Practices

Implementing these best practices can significantly enhance the efficiency and effectiveness of Google’s crawling process. These are vital for maintaining a positive relationship with Google’s search algorithms.

- Maintain a well-structured website: Use a clear sitemap and a logical site architecture to help Google crawlers understand and navigate your website efficiently.

- Ensure website speed and performance: A slow website can negatively affect the crawling process. Prioritize fast loading times to ensure a positive user experience.

- Regularly update and maintain your site: Regularly update your website content to keep it fresh and relevant. Ensure broken links are repaired promptly to avoid issues.

Conclusive Thoughts

In conclusion, understanding how Google crawls your links is paramount to successful SEO. By optimizing your website’s structure, links, and attributes, you can significantly improve your website’s visibility in search results. This exploration of link crawling provides a solid foundation for anyone looking to enhance their website’s search engine performance and attract more organic traffic. By adhering to best practices, you can ensure Google’s crawlers easily access and index your valuable content.